Everything that occurs is a change in state, or an event for short: the sun comes out, the bus arrives, plants emerge from the ground, a neighbor says hello, the calendar page turns to a certain date or time, or your team lead yells that you should get to da choppa. Among all events, the ones that must be observed, recorded, or otherwise acted upon can be categorized based on how timely the reaction needs to be.

Realtime is the ability to react to anything that occurs as it occurs, before it loses its importance.

In a business context, everything that occurs in a system is an event, and realtime is the timely extraction of business value from events with very small windows of opportunity, or deadlines.

Any change to data, or any other interaction with the system by a human or machine agent, can be considered an event with business value and recorded in a log for future processing. On examination, however, some of these events turn out to have immediate value and need to be processed on the spot.

For example, the value may lie in providing immersive live functionality to external users, such as live document collaboration; or, internally, in enabling a near-instantaneous understanding of the system, as with monitoring and processing streams of emergency data.

Check out The Periodic Table of Realtime ⚗️: an interactive resource for developers that are just starting out in the world of realtime or looking to upscale and enhance their event-driven skills.

Realtime Use Cases and Examples

Computer systems generally fall into one of three categories:

- Transformational: sequential input, process, output; each iteration is independent of any other and terminates after issuing the output; minimizing processing time is a COULD.

- Interactive: transformational, but with output looped back into input, making the next input dependent on the previous output; repeats until input terminates; minimizing processing time is a SHOULD.

- Reactive: interactive, but with an explicit time constraint; minimizing processing time is a MUST, with an automatic assumption of instantaneity of reaction.

Interactive systems can be very fast, and can sometimes be used to simulate realtime. However, realtime systems by definition fall squarely within the third category, which has a substantial number of use cases of its own. Realtime uses and experiences come in many flavors. Here’s just a small sample:

- Event streaming and stream processing

- Realtime applications

- Synchronization of data, devices, components, services, and systems

- Data visualizations and analytics dashboards

- Educational experiences

- System monitoring

- Asset tracking

- Realtime banking (incl. fraud detection and payment processing)

- Transport and logistics data

- Embedded chat with presence

- Live visual collaboration

- Market data

- Smart automation and Internet of Things (IoT)

- Realtime APIs

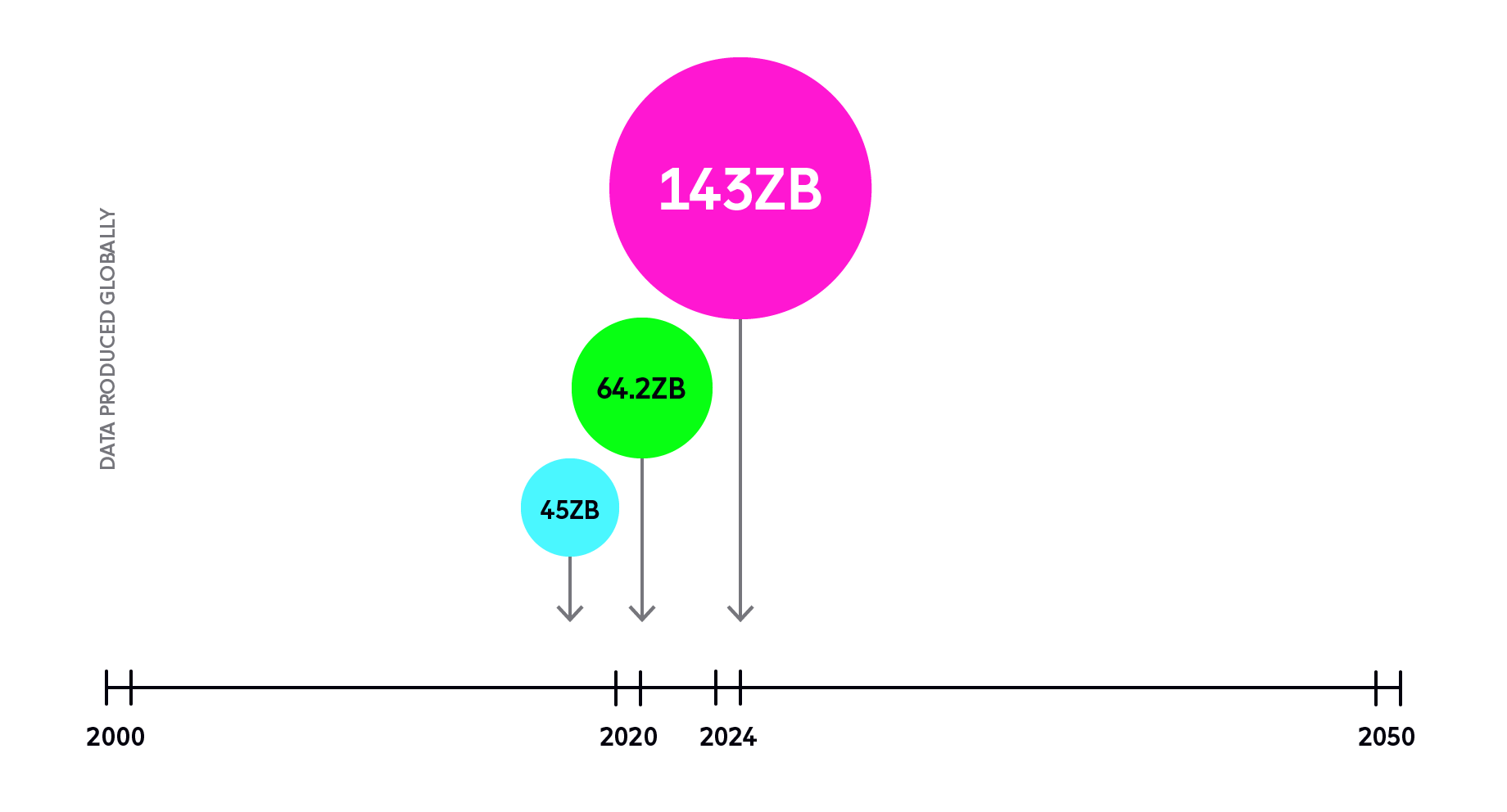

The list is far longer than this, and growing in tandem with the increasingly insatiable consumption of data.

Source: Worldwide Global DataSphere Forecast, 2020–2024: The COVID-19 Data Bump and the Future of Data Growth, IDC Doc #US44797920 (April 2020)

Let’s take a brief look at a couple of examples of realtime data being leveraged to business ends.

Realtime applications

End-users and business leaders increasingly demand realtime experiences. They are often digital natives, and they expect digital experiences to be interactive, immersive, responsive, and immediate. They need realtime reports of state changes on which to base executive decisions, now. They want smooth collaborative environments without lag or edit conflicts, as with Figma. Or they seek dependable interactive educational experiences, geolocation (including asset tracking), or virtual events like Hopin.

Delivering smooth, seamless experiences dependably to mobile apps at global scale requires overengineering everything from data centers to consensus-resolution algorithms. For example, you have to provision enough redundancy to provide availability, and also design your system to be fault tolerant to provide reliability. You also have to make protocol and architectural design choices that suit your purposes and those of your users.

These are hard engineering problems that require solving in order to make realtime come to life for your users. However, the end result is worth it because the demand for realtime is only growing and consumers reward responsive technologies they’re already used to.

Solving these problems for developers is part of Ably’s mission.

Distributed Data Streaming and Stream Processing

Event streaming is a particular case of realtime message processing. It involves delivery and processing of sequences of ordered and persisted messages (events) as they go by, instead of one at a time. This is commonly used for live transformations, filters, joins, and other live, on-the-fly calculations useful in monitoring any system, be it internal logging or social media. One example could be counting how many social media mentions of a particular string occur in a 1-minute sliding window. Another is finding events that occur more frequently than historical data would suggest.
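To make the sliding-window example concrete, here is a minimal sketch in Python. It assumes events arrive as `(timestamp, text)` pairs in timestamp order; the names `SlidingWindowCounter` and `count_mentions` are hypothetical and not part of any particular streaming platform.

```python
from collections import deque


class SlidingWindowCounter:
    """Counts events seen within the last `window_seconds` seconds."""

    def __init__(self, window_seconds=60.0):
        self.window_seconds = window_seconds
        self._timestamps = deque()

    def add(self, timestamp):
        # Assumption: events arrive in non-decreasing timestamp order.
        self._timestamps.append(timestamp)

    def count(self, now):
        # Evict everything that has fallen out of the window (now - window, now].
        cutoff = now - self.window_seconds
        while self._timestamps and self._timestamps[0] <= cutoff:
            self._timestamps.popleft()
        return len(self._timestamps)


def count_mentions(events, term, window_seconds=60.0):
    """Yield (timestamp, count_in_window) after each event that mentions `term`."""
    counter = SlidingWindowCounter(window_seconds)
    for timestamp, text in events:
        if term.lower() in text.lower():
            counter.add(timestamp)
            yield timestamp, counter.count(now=timestamp)


events = [
    (0.0, "ably rocks"),
    (10.0, "unrelated post"),
    (30.0, "Ably again"),
    (70.0, "more ably"),
]
mention_counts = list(count_mentions(events, "ably"))
```

A production stream processor would also have to deal with out-of-order and late events, which this sketch deliberately ignores.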

Many vendors and implementations of data streaming and stream processing exist, including Apache Kafka, Amazon Kinesis, Google Cloud Pub/Sub, and Azure Event Hubs for the former; and Apache Beam, Apache Flink, Amazon Kinesis Data Analytics, Google Cloud Dataflow, and Azure Stream Analytics for the latter.

You can read more about them in our blog post about stream processing platforms.

A dependable/reliable system tasked with event streaming and stream processing should be able to handle the following:

- Backpressure

- Exactly-once processing semantics

- Persistence of messages

- Ordering of messages

- Reading the stream from a specific point in time
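Three of the items above (persistence, ordering, and reading from a specific point) can be illustrated with an append-only log. The following is a simplified in-memory sketch, not how any of the platforms named earlier store data; `EventLog` and its methods are hypothetical names, and a real system would write to durable, replicated storage.

```python
import bisect


class EventLog:
    """In-memory, append-only log standing in for a persisted, ordered stream."""

    def __init__(self):
        self._timestamps = []
        self._payloads = []

    def append(self, timestamp, payload):
        # Ordering: entries keep their arrival order; the list index is the offset.
        if self._timestamps and timestamp < self._timestamps[-1]:
            raise ValueError("timestamps must be non-decreasing")
        self._timestamps.append(timestamp)
        self._payloads.append(payload)
        return len(self._payloads) - 1

    def read_from_offset(self, offset):
        # Persistence: entries are never removed, so any consumer can replay them.
        return [(i, self._payloads[i]) for i in range(offset, len(self._payloads))]

    def read_from_time(self, timestamp):
        # Binary search for the first entry at or after `timestamp`.
        start = bisect.bisect_left(self._timestamps, timestamp)
        return self.read_from_offset(start)


log = EventLog()
log.append(1.0, "a")
log.append(2.0, "b")
log.append(2.0, "c")
log.append(5.0, "d")
replayed = log.read_from_time(2.0)
```

Because consumers track their own offset, a consumer that disconnects can resume from where it left off rather than losing the messages published in the meantime.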

Realtime must-haves

Events or messages can be transmitted from their source to the interested parties in any number of ways, including traditional request-response communication systems. Realtime events, however, carry several requirements of their own, chief among them low latency, scalability, and event-driven architectural choices.

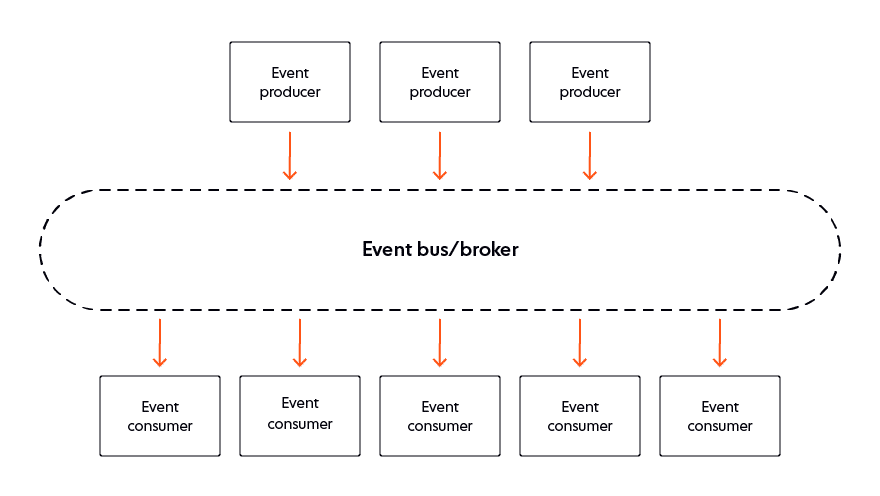

Low latency comes mostly from geographic proximity which — for solutions that claim global reach — requires managed data centers and balancing algorithms across the planet. Scalability is helped along by a well-designed message broker positioned between senders and receivers, in accordance with the publisher/subscriber (pub/sub) architectural pattern. This is the most common design pattern used in the realtime realm. Additionally, the whole thing is optimized for handling events by using event-oriented protocols such as WebSocket, SSE, MQTT, and AMQP.
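As a rough illustration of the pub/sub pattern described above, here is a minimal in-process broker in Python. It is only a sketch: the `Broker` class and the channel name are hypothetical, and a real broker would sit on the network, speak one of the protocols listed above, and deliver to subscribers concurrently.

```python
from collections import defaultdict


class Broker:
    """Sits between publishers and subscribers so neither knows about the other."""

    def __init__(self):
        self._subscribers = defaultdict(list)  # channel name -> list of callbacks

    def subscribe(self, channel, callback):
        self._subscribers[channel].append(callback)

    def publish(self, channel, message):
        # The publisher hands the message over once; the broker fans it out.
        callbacks = list(self._subscribers.get(channel, []))
        for callback in callbacks:
            callback(message)
        return len(callbacks)  # number of subscribers that were notified


broker = Broker()
inbox_a, inbox_b = [], []
broker.subscribe("scores", inbox_a.append)
broker.subscribe("scores", inbox_b.append)
delivered = broker.publish("scores", {"home": 1, "away": 0})
```

The publisher never learns who is listening, which is what lets the number of subscribers grow without any change on the publishing side.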

These are just some of the aspects to consider when thinking about realtime. However, at Ably, we extend all of the above to include the requirements we believe everyone should demand from their realtime providers:

- To occur below the 100 ms threshold of human perception (imperceptible to most humans).

- To be live and synchronous like a conversation or a team interaction.

- To be event-driven instead of sequential (request-response).

- To support the handling of event streaming.

- To offer stream continuity/resume, message ordering, idempotency, and data integrity.

- To operate globally, beyond the edge of the local network.

- To be able to handle both server-initiated (push) and client-initiated (pull) notifications.

- To be dependable/reliable at massive scale (the kind of scale that makes your cloud vendor concerned).

At the very least, dependable realtime platforms should offer the following features.

Performance

Predictable latency even when operating under unreliable network conditions, such as those of a WAN.

For example, Ably’s round trip latency from any of its 700+ PoPs globally that receive at least 1% of its global traffic is 6.5ms for the 99th percentile.
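For readers less familiar with percentile figures: a 99th-percentile latency is the value that 99% of measured round trips come in at or under. The sketch below computes it with the nearest-rank method, one of several common definitions. The sample data is made up for illustration and is not a real measurement.

```python
import math


def percentile(samples, p):
    """Nearest-rank percentile: smallest sample with at least p% of samples at or below it."""
    if not samples:
        raise ValueError("samples must not be empty")
    ordered = sorted(samples)
    rank = math.ceil(p * len(ordered) / 100)  # 1-based rank
    return ordered[max(rank, 1) - 1]


# Hypothetical round-trip times in milliseconds: mostly fast, one slow outlier.
latencies_ms = [5.0] * 98 + [6.5, 40.0]
p50 = percentile(latencies_ms, 50)
p99 = percentile(latencies_ms, 99)
```

Here the median is 5.0 ms and the 99th percentile is 6.5 ms, while the single 40 ms outlier only shows up at the 100th percentile. This is why tail percentiles say more about predictability than an average does.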

Reliability

Reliability comes from infrastructure that is redundant at the regional and global levels, so that it can survive multiple failures without outages. For example, Ably has 99.999999% message survivability for instance and datacenter failure.

Availability

Availability is uptime. However, apart from at least a 99.999% uptime SLA, a realtime service should be designed and battle-tested for extreme scale and elasticity.

For example, Ably includes a 50% global capacity margin for instant surges and the ability to double capacity every 5 minutes.
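To put these figures in perspective, the following sketch works through the arithmetic. The function names are hypothetical, and the capacity calculation assumes an idealized, sustained doubling every 5 minutes, which is a simplification.

```python
def allowed_downtime_minutes(uptime_percent, period_days=365):
    """Downtime budget implied by an uptime percentage over a period."""
    total_minutes = period_days * 24 * 60
    return total_minutes * (1 - uptime_percent / 100)


def capacity_after(base_capacity, elapsed_minutes, doubling_minutes=5):
    """Capacity after repeated doubling, assuming the doubling rate is sustained."""
    return base_capacity * 2 ** (elapsed_minutes / doubling_minutes)


yearly_budget = allowed_downtime_minutes(99.999)  # roughly 5.26 minutes per year
scaled = capacity_after(100, 15)                  # three doublings: 100 -> 800
```

In other words, a 99.999% SLA leaves a little over five minutes of downtime per year, and three doublings over 15 minutes would multiply capacity eightfold.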

Integrity

Integrity for message ordering and delivery, including exactly-once processing.
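One common way to approach ordering and exactly-once processing on the receiving side is to number messages and make the consumer idempotent. The sketch below assumes the sender attaches a sequence number starting at 0; `InOrderConsumer` is a hypothetical name, and this is not a description of how Ably implements its guarantees.

```python
class InOrderConsumer:
    """Processes each sequence number once, in order, despite duplicates and reordering."""

    def __init__(self, handler):
        self._handler = handler
        self._next_seq = 0   # next sequence number we are allowed to process
        self._pending = {}   # messages that arrived ahead of their turn

    def receive(self, seq, payload):
        if seq < self._next_seq or seq in self._pending:
            return  # duplicate delivery: already processed or already buffered
        self._pending[seq] = payload
        # Drain the buffer for as long as the next expected message is available.
        while self._next_seq in self._pending:
            self._handler(self._pending.pop(self._next_seq))
            self._next_seq += 1


processed = []
consumer = InOrderConsumer(processed.append)
# The network delivers messages out of order and with duplicates.
for seq, payload in [(1, "b"), (0, "a"), (1, "b"), (2, "c"), (0, "a")]:
    consumer.receive(seq, payload)
```

After this loop, `processed` holds `["a", "b", "c"]`: each message is handled exactly once and in sequence, even though the deliveries were neither.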

Realtime Applications Architecture and Design Patterns

Interactive architectural approaches such as service-oriented architecture (SOA) generally aim to process all events as quickly as possible. However, some traditional constructs such as the request-response pattern can be an anti-pattern when trying to react to ephemeral events not just quickly but reliably and at massive scale. This is where event-driven architecture (EDA) comes into play. It treats events as first-class citizens and can thus complement traditional approaches when trying to handle the hard engineering problems arising from realtime requirements.

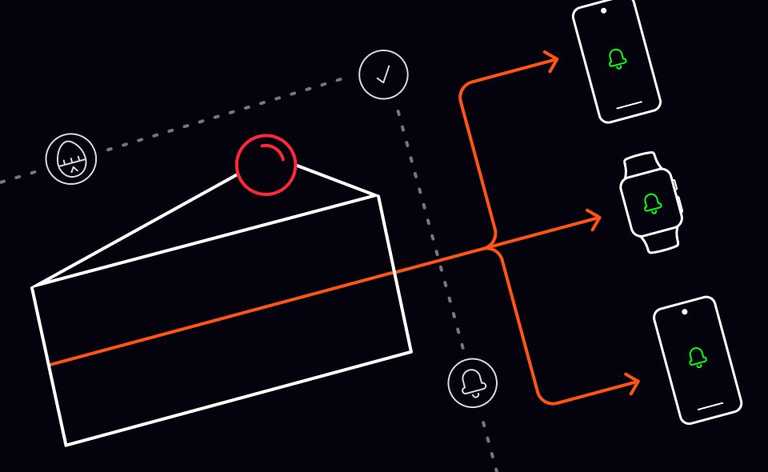

For example, one aspect of EDA allows subscribers to listen for events without constant polling (requesting) and repolling. Close to 100% of “are we there yet?” questions have no value. Removing the need to ask questions that have no answer massively reduces the communication overhead. This in turn paves the way for connecting a much larger number of simultaneous listeners, who simply subscribe to the channels that concern them and can relax knowing they will be informed in realtime, as the information comes in. The decoupling also means the producer of the information no longer has to take care of individually informing every single subscriber of every event, one by one. Instead, they can all receive the alert/call-to-action simultaneously, then go off to take care of their piece independently, in parallel.
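The claim that close to 100% of polls are wasted can be checked with some back-of-the-envelope arithmetic. The sketch below assumes evenly spaced events and a fixed polling interval, which is an idealization; `wasted_poll_fraction` is a hypothetical helper.

```python
def wasted_poll_fraction(poll_interval_s, event_interval_s):
    """Fraction of polls that come back empty when one event arrives per interval."""
    polls_per_event = event_interval_s / poll_interval_s
    if polls_per_event <= 1:
        return 0.0  # polling no faster than events arrive: every poll finds something
    return (polls_per_event - 1) / polls_per_event


# A client polling once per second for an event that happens once an hour.
hourly = wasted_poll_fraction(poll_interval_s=1, event_interval_s=3600)
```

Under these assumptions, 3,599 of every 3,600 requests return nothing, which is about 99.97%. With a subscription, the same listener receives a single message per event.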

Another aspect of realtime to consider is the fact that deadlines — or windows of opportunity — can be categorized by how catastrophic the consequences of missing a deadline would be, from hard to soft. Hard realtime means missing a deadline cannot be tolerated under any circumstances. Soft realtime means the quality of service merely degrades as the clock spins past the deadline.
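The difference between hard and soft deadlines can be expressed as a small sketch. The linear decay and the `decay_ms` parameter are assumptions chosen purely for illustration; real systems define degradation in their own terms.

```python
class DeadlineMissed(Exception):
    """Raised when a hard deadline is exceeded."""


def result_value(elapsed_ms, deadline_ms, hard, decay_ms=100.0):
    """Value of a response (1.0 = full value) given how long it took."""
    if elapsed_ms <= deadline_ms:
        return 1.0
    if hard:
        # Hard realtime: a late result is a failure, not a degraded success.
        raise DeadlineMissed(f"{elapsed_ms - deadline_ms} ms past the deadline")
    # Soft realtime: value degrades (here, linearly) as the overrun grows.
    overrun_ms = elapsed_ms - deadline_ms
    return max(0.0, 1.0 - overrun_ms / decay_ms)


on_time = result_value(50, 100, hard=False)      # 1.0
slightly_late = result_value(150, 100, hard=False)  # 0.5
far_too_late = result_value(300, 100, hard=False)   # 0.0
```

With `hard=True`, the two late calls above would raise `DeadlineMissed` instead of returning a reduced value.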

Generally, web technologies do not provide suitable dependability for hard deadlines, but are sufficient for medium or soft deadlines.

Note: In this respect, Ably is somewhat overengineered, and it treats all deadlines as if they were hard realtime.

Conclusion

There are many events that occur in the course of doing business — customers interact with systems, systems interact with systems — and a significant portion of them carries business value any time between now and some point in the future. Not every event needs to be handled this instant.

However, if that point in the future is mere fractions of a second, after which point the event’s value is lost, then said event has a very short deadline and must be handled in realtime.

Reacting to events in realtime, instantaneously, is something we do in the physical world all the time, like watching ourselves in the mirror, or recovering our balance after (or while) we trip.

Reacting to brief, short-value-window business events in realtime — especially if done dependably and at scale — dramatically shortens the feedback loop, bringing business value now. Engineering a system of such realtime reactions is not trivial, but has tremendous potential in proportion to the growing demand from those who know its value and who have come to expect realtime data, realtime processing, and realtime experiences. No one waits for progress bars.

The two main paths toward taking advantage of realtime are making one’s own, or choosing a vendor. We are one of those vendors, and we have (mostly) solved all those really hard problems. Curious to find out more? Contact our technical experts. They could talk all day about the fun, complicated problems they’ve been solving.