With demand for realtime data growing by the day, more and more organizations are embracing event-driven architectures powered by event streaming & stream processing technologies. In this blog post, we’ll take a look at some of the most popular stream processing platforms, analyzing their strengths and shortcomings.

Stream processing in a nutshell

As a quick definition, stream processing is the realtime or near-realtime processing of data “in motion”. Unlike batch processing, where data is collected over time and then analyzed, stream processing enables you to query and analyze continuous data streams, and react to critical events within a brief timeframe (usually milliseconds).
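Here is a minimal Python sketch that makes the batch/stream contrast concrete. It is not tied to any particular platform. The batch function waits for the full dataset before answering, while the streaming function updates its answer as each event arrives. The function names and sample readings are purely illustrative.

```python
from typing import Iterable, Iterator


def batch_average(readings: list[float]) -> float:
    # Batch: wait until all the data has been collected, then compute once.
    return sum(readings) / len(readings)


def streaming_average(readings: Iterable[float]) -> Iterator[float]:
    # Stream: update the result incrementally as each event arrives and
    # emit an up-to-date answer after every event.
    count = 0
    total = 0.0
    for value in readings:
        count += 1
        total += value
        yield total / count


readings = [10.0, 20.0, 30.0, 40.0]
print(batch_average(readings))            # 25.0
print(list(streaming_average(readings)))  # [10.0, 15.0, 20.0, 25.0]
```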

Stream processing goes hand in hand with event streaming. Let’s briefly explain what we mean by that. Take Apache Kafka, a popular open-source pub/sub messaging solution that is primarily used to enable the flow of data between back-end apps and services. Some services publish (write) events to Kafka topics, while other services consume (read) events from Kafka, all in real time. This is called event streaming.

Kafka also provides stream processing capabilities through Kafka Streams, a client library that allows you to write Java and Scala applications that can:

- Consume event streams from Kafka

- Analyze, join, aggregate, and transform them

- Publish the output back into Kafka
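The consume, transform, and publish cycle can be sketched in plain Python. This is not the Kafka or Kafka Streams API. `InMemoryTopic` is a hypothetical stand-in for a single-partition topic, modeled as an append-only log that consumers read by offset. The "large order" rule is an invented example transformation.

```python
class InMemoryTopic:
    """Hypothetical stand-in for a single-partition Kafka topic (not the Kafka API)."""

    def __init__(self, name):
        self.name = name
        self.log = []  # append-only log; reading does not delete events

    def publish(self, event):
        self.log.append(event)

    def read_from(self, offset):
        # Return every event at or after the given offset.
        return self.log[offset:]


def run_processor(source, sink, offset=0):
    """Consume from `source`, keep large orders, publish them to `sink`."""
    for event in source.read_from(offset):                 # 1. consume
        if event["amount"] >= 100:                          # 2. transform (filter + enrich)
            sink.publish({**event, "flag": "large_order"})  # 3. publish
        offset += 1
    return offset  # position to resume from next time, like a committed offset


orders = InMemoryTopic("orders")
large_orders = InMemoryTopic("large-orders")
for order_id, amount in enumerate((50, 150, 300)):
    orders.publish({"order_id": order_id, "amount": amount})

next_offset = run_processor(orders, large_orders)
print(next_offset)                              # 3
print([e["amount"] for e in large_orders.log])  # [150, 300]
```

Tracking an offset instead of deleting events mirrors how a Kafka consumer can resume where it left off, or re-read the log, while other consumers read the same events independently.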

Organizations leverage stream processing to make smarter and faster business decisions, obtain realtime analytics and insights, act on time-sensitive and mission-critical data, and build features delivered to end-user devices in real time. Here are some of the key use cases for stream processing:

- Realtime fraud detection & payments

- IoT sensor data

- Realtime dashboards, e.g., medical BI dashboard

- Log, traffic, and network monitoring

- Context-aware online advertising & user behavior tracking

- Geofencing and vehicle tracking

- Cybersecurity

8 stream processing platforms to consider

When it comes to choosing a stream processing solution, there are plenty of options available for you to pick from. Let’s now look at 8 of the most popular stream processing platforms and review their characteristics, strengths, and shortcomings.

Apache Spark

Apache Spark is a unified open-source analytics engine designed for large-scale data processing. Thanks to in-memory execution, it can run some workloads up to 100x faster than Hadoop MapReduce. It can also process large volumes of complex data at high speed.

Apache Spark comes with high-level APIs that make big data processing and distributed computing approachable for developers. It supports programming languages such as Python, Java, Scala, and SQL.

Spark can run on its own in standalone cluster mode. It can also run on cluster managers such as Hadoop YARN, Kubernetes, and Apache Mesos.
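By default, Spark's Structured Streaming engine processes streaming data as a series of small micro-batches. The sketch below shows only that idea, in plain Python. It does not use Spark's API. `micro_batches`, `run_stream`, and the one-second interval are hypothetical choices made for illustration.

```python
def micro_batches(events, interval):
    """Group (arrival_time, payload) events into consecutive fixed-interval batches."""
    batches = {}
    for arrival_time, payload in events:
        batch_id = int(arrival_time // interval)  # which trigger interval it falls into
        batches.setdefault(batch_id, []).append(payload)
    # Intervals with no events produce no batch in this simplified sketch.
    return [batches[b] for b in sorted(batches)]


def run_stream(events, interval, process_batch):
    # Each micro-batch is handed to ordinary batch logic, one after another.
    return [process_batch(batch) for batch in micro_batches(events, interval)]


events = [(0.2, "click"), (0.7, "view"), (1.1, "click"), (2.5, "click"), (2.9, "view")]
print(run_stream(events, interval=1.0, process_batch=len))  # [2, 1, 2]
```

The design trade-off is that per-batch overhead and latency grow with the interval, while throughput benefits from processing events in groups.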

Pros

- It is fault-tolerant

- Supports multiple languages

- Supports advanced analysis

- Very fast performance

- Makes batch processing easy

Cons

- Steep learning curve

- Consumes a lot of memory

- No automatic caching; data must be cached manually (for example with cache() or persist())

Examples of companies using Spark are Uber, Shopify, Slack, Delivery Hero, and HubSpot.

Apache Spark on G2

Apache Spark on Stackshare

Apache Kafka Streams

Kafka Streams is a Java stream processing library provided by Apache Kafka. Its high-level DSL gives developers ready-made filtering, joining, aggregating, and grouping operations, so they don’t have to write that logic from scratch.

Because it is a Java library, it is easy to embed in the services you already run and turn them into sophisticated, scalable, fault-tolerant applications.

Apache Kafka Streams has a low barrier to entry: you write and deploy standard Java and Scala applications on the client side. Additionally, you don’t need a separate processing cluster or cluster manager to keep it running.
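Conceptually, a Kafka Streams topology that filters, groups by key, and then counts turns a stream of events into a continuously updated table (a KTable). It emits a changelog record for every update. The plain-Python sketch below mimics that behavior only. It is not the Kafka Streams DSL, and `filter_group_count` and the payment amounts are made up for illustration.

```python
def filter_group_count(events, predicate):
    """Conceptual stream -> filter -> group by key -> count -> table."""
    table = {}      # current count per key, like a KTable's latest state
    changelog = []  # every update emitted downstream, in order
    for key, value in events:
        if not predicate(value):
            continue  # filtered out: no state change, nothing emitted
        table[key] = table.get(key, 0) + 1
        changelog.append((key, table[key]))
    return table, changelog


payments = [("alice", 120), ("bob", 5), ("alice", 300), ("bob", 250)]
table, changelog = filter_group_count(payments, lambda amount: amount > 100)
print(table)      # {'alice': 2, 'bob': 1}
print(changelog)  # [('alice', 1), ('alice', 2), ('bob', 1)]
```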

Pros

- Integrates with existing applications

- Offers low latency, down to around 10 milliseconds

- Reduces the need for multiple integrations

- Combined with Kafka, can replace traditional message brokers in many architectures

Cons

- Lacks some messaging paradigms, such as point-to-point queues

- Falls short in terms of analytics

- Performance can degrade as the number of topics and partitions in a Kafka cluster grows

Examples of companies using Apache Kafka Streams are Zalando, TransferWise, Pinterest and Groww.

Apache Kafka Streams on Stackshare

Apache Flink

Apache Flink is an open-source stream processing framework designed for stateful computations over unbounded and bounded data streams. It can run stateful streaming applications at any scale and executes both batch and stream processing.

With Flink, you can ingest streaming data from many sources, process it, and distribute the work across many nodes.

The interface is easy to navigate and doesn’t come with a steep learning curve. Flink also ships with built-in connectors for third-party message queues and databases. You can integrate it with cluster resource managers such as Hadoop YARN and Kubernetes.

Flink can process millions of events per second. It also supports graph processing, machine learning, and complex event processing (CEP).
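In Flink, stateful stream processing often means event-time windows combined with watermarks. A watermark tells the system when a window can be treated as complete, even though events arrive out of order. The following is a simplified plain-Python sketch of tumbling event-time windows with a bounded-out-of-orderness watermark. It is not Flink's API and does not reproduce its exact firing semantics. The function name and parameters are hypothetical.

```python
from collections import defaultdict


def tumbling_window_counts(events, window_size, max_out_of_orderness):
    """Count events per key in tumbling event-time windows (simplified sketch).

    events: (event_time, key) pairs in arrival order, possibly out of order.
    Returns (window_start, key, count) tuples in the order windows fire.
    """
    open_windows = defaultdict(lambda: defaultdict(int))  # window_start -> key -> count
    watermark = float("-inf")
    results = []
    for event_time, key in events:
        window_start = event_time - (event_time % window_size)
        if window_start + window_size <= watermark:
            continue  # late event: its window already fired, so drop it
        open_windows[window_start][key] += 1
        # Watermark: no events older than this are expected any more.
        watermark = max(watermark, event_time - max_out_of_orderness)
        # Fire (emit and discard) every window that ends at or before the watermark.
        for start in sorted(open_windows):
            if start + window_size <= watermark:
                for k, count in sorted(open_windows.pop(start).items()):
                    results.append((start, k, count))
    # A bounded stream has ended: flush the windows that are still open.
    for start in sorted(open_windows):
        for k, count in sorted(open_windows[start].items()):
            results.append((start, k, count))
    return results


events = [(1, "a"), (3, "b"), (2, "a"), (12, "a"), (7, "b"), (25, "a"), (4, "b")]
print(tumbling_window_counts(events, window_size=10, max_out_of_orderness=5))
# [(0, 'a', 2), (0, 'b', 2), (10, 'a', 1), (20, 'a', 1)]
```

The out-of-order event at time 7 is still counted because the watermark has not yet passed its window. The final event at time 4 arrives after window 0 has fired, so it is dropped. Real systems also offer options such as allowed lateness or side outputs for late data.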

Pros

- Low latency and high throughput

- Simple and straightforward UI

- Dynamically analyzes and optimizes tasks

- Clean DataStream API and documentation

Cons

- Integrating with the Hadoop YARN ecosystem can be challenging

- APIs are focused mainly on Java and Scala

- Limited support from forums and community

Examples of companies using Flink are Zalando, Lime, Gympass, and CRED.

Apache Flink on G2

Apache Flink on Stackshare

Spring Cloud Data Flow

Spring Cloud Data Flow is a microservice-based streaming and batch processing platform. It provides developers with the tools they need to create data pipelines for common use cases.

You can use this platform to ingest data or for ETL import/export, event streaming, and predictive analysis. Developers can adopt Spring Cloud Stream message-driven microservices and run them locally or in the cloud.

Spring Cloud Data Flow has an intuitive graphical editor that makes building data pipelines interactive for developers. Developers can also monitor deployed apps using systems such as Wavefront and Prometheus.
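Spring Cloud Data Flow's stream DSL composes apps with a Unix-style pipe syntax (for example, `time | log`), in which a source feeds processors and the processors feed a sink. As a rough analogy only, the sketch below chains plain Python generator stages the same way. `uppercase`, `drop_empty`, `pipeline`, and `log_sink` are hypothetical stand-ins, not Spring Cloud Stream apps.

```python
from functools import reduce
from typing import Callable, Iterable, Iterator

# Each stage takes a stream of messages and yields a (possibly modified) stream.
Stage = Callable[[Iterable[str]], Iterator[str]]


def drop_empty(messages: Iterable[str]) -> Iterator[str]:
    # Processor: filter out blank messages.
    for m in messages:
        if m.strip():
            yield m


def uppercase(messages: Iterable[str]) -> Iterator[str]:
    # Processor: transform each message.
    for m in messages:
        yield m.upper()


def pipeline(*stages: Stage) -> Stage:
    # Chain stages left to right, like "source | processor | sink" in a stream DSL.
    return lambda messages: reduce(lambda stream, stage: stage(stream), stages, messages)


def log_sink(messages: Iterable[str]) -> list[str]:
    # Sink: collect whatever reaches the end of the pipeline.
    return list(messages)


stream = pipeline(drop_empty, uppercase)
print(log_sink(stream(["hello", "", "data flow"])))  # ['HELLO', 'DATA FLOW']
```

Because each stage only depends on the shape of its input and output, stages can be reordered or swapped independently, which is the property the pipe DSL relies on.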

Pros

- Developers can deploy using a DSL, the shell, REST APIs, or the admin UI

- Allows you to scale stream and batch pipelines without interrupting data flows

- Great integrations with platforms like Kafka and Elasticsearch

Cons

- Visual user interface could use some improvements

- Features like the monitoring tools need more development

Examples of companies using Spring Cloud Data Flow are CoreLogic, Health Care Service Corporation, and Liberty Mutual.

Spring Cloud Data Flow on G2

Amazon Kinesis

Amazon Kinesis Data Streams is a durable service for collecting, processing, and analyzing streaming data in real time. It is designed to give you the information you need to make decisions quickly.

You can ingest real-time data from event streams, social media feeds, application logs, and other applications. The streaming platform is fully managed. You can use it to build real-time applications like monitoring user behavior or fraud detection.
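Kinesis Data Streams routes each record to a shard by MD5-hashing its partition key into a 128-bit integer and matching that value against the shards' hash key ranges. The sketch below assumes the shards split the hash key space into equal ranges, which is a simplification. `shard_for` is a hypothetical helper, not part of any AWS SDK.

```python
import hashlib

HASH_SPACE = 2 ** 128  # MD5 produces a 128-bit value


def shard_for(partition_key: str, shard_count: int) -> int:
    """Map a partition key to a shard index.

    Assumes shards split the hash key space into equal, contiguous ranges
    (an assumption; real streams can have uneven ranges after resharding).
    """
    digest = hashlib.md5(partition_key.encode("utf-8")).digest()
    hash_key = int.from_bytes(digest, "big")
    range_size = HASH_SPACE // shard_count
    # Clamp so the top of the hash space maps to the last shard when
    # HASH_SPACE is not evenly divisible by shard_count.
    return min(hash_key // range_size, shard_count - 1)


for user in ("user-1", "user-2", "user-3"):
    print(user, "->", shard_for(user, shard_count=4))
```

Because the mapping is deterministic, records with the same partition key always land on the same shard, which is what preserves per-key ordering. It also means a few very hot keys can overload a single shard.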

Pros

- Easy to set up and maintain

- Scales to handle very large volumes of streaming data

- Integrates with Amazon’s big data toolset like Amazon Kinesis Data Analytics, Amazon Kinesis Data Firehose, and AWS Glue Schema Registry.

Cons

- Priced per shard per hour, so costs grow with the number of shards

- Documentation isn’t straightforward

- Offers no support for direct streaming

- The Kinesis Client Library (KCL) used to consume data is clunky and heavyweight

Examples of companies using Kinesis are Figma, Instacart, Deliveroo, and Lyft.

Amazon Kinesis on G2

Amazon Kinesis on Stackshare

Google Cloud Dataflow

Google Cloud Dataflow is a fully managed Google Cloud service for executing data processing pipelines. With it, you can build streaming data pipelines with low data latency.

Google Cloud Dataflow takes a serverless approach, so developers can focus on programming instead of managing server clusters. It automatically scales resources to match your workloads, which helps keep ownership costs down.

Additionally, Dataflow pipelines are written with the Apache Beam SDK. Beam offers a single programming model for batch and streaming data, covering MapReduce-style operations as well as windowing and triggers for controlling accuracy. In the long run, this reduces complexity and makes stream analytics accessible to both data analysts and data engineers.

You can use it to build anomaly detection applications, real-time website analytics dashboards, or pipelines that process log entries from various sources.
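To illustrate the anomaly detection use case, the sketch below flags values that deviate sharply from the recent mean, using a rolling z-score over a sliding window. It is plain Python, not the Apache Beam SDK. The window size, threshold, and latency values are arbitrary assumptions. In a real Dataflow pipeline, logic like this could live inside a Beam transform.

```python
from collections import deque
from math import sqrt


def detect_anomalies(values, window=5, threshold=3.0):
    """Flag values more than `threshold` standard deviations away from
    the mean of the previous `window` values (rolling z-score)."""
    recent = deque(maxlen=window)  # sliding window of the latest values
    anomalies = []
    for i, v in enumerate(values):
        if len(recent) == window:
            mean = sum(recent) / window
            std = sqrt(sum((x - mean) ** 2 for x in recent) / window)
            if std > 0 and abs(v - mean) / std > threshold:
                anomalies.append((i, v))
        recent.append(v)  # the oldest value drops out automatically
    return anomalies


latencies_ms = [20, 22, 21, 19, 23, 22, 95, 21, 20]
print(detect_anomalies(latencies_ms))  # [(6, 95)]
```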

Pros

- It’s fully managed

- It removes operational complexities

- Minimizes pipeline latency

- Native integrations with AI Platform and BigQuery

- Unified stream and batch processing

- Real-time AI-powered processing patterns

Cons

- Restricted to only Cloud Datastore service

- BigQuery/Dataflow in streaming mode can be expensive

- Google Content Delivery Network doesn’t work with custom sources

- It’s not suited for experimental data processing jobs

Examples of companies using Google Cloud Dataflow are Spotify, The New York Times, and Snowplow.

Google Cloud Dataflow on G2

Google Cloud Dataflow on Stackshare

Apache Pulsar

Apache Pulsar is a cloud-native, distributed messaging and streaming platform. Originally developed and deployed at Yahoo, Pulsar serves as the consolidated messaging platform connecting Yahoo Finance, Yahoo Mail, and Flickr to data.

Pulsar provides a high-performance solution for server-to-server messaging and geo-replication of messages across clusters. Additionally, it can scale to over a million topics and expand to hundreds of nodes. It's lightweight, easy to deploy, and doesn't need an external stream processing engine.

Pulsar has a multi-layer architecture in which each layer is scalable, can be distributed, and is decoupled from the others. It also has granular resource management that prevents producers, consumers, and topics from overwhelming the cluster.
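Pulsar's resource controls include broker-side limits, such as publish and dispatch rate limits, which you configure rather than implement. To illustrate the general idea of keeping one producer from overwhelming a cluster, the sketch below uses a classic token bucket. This is a generic rate-limiting technique swapped in for illustration, not Pulsar's implementation, and the rate and burst values are hypothetical.

```python
class TokenBucket:
    """Classic token bucket (generic technique, not Pulsar's code).

    Allows bursts of up to `capacity` messages and refills at `rate`
    tokens per second. Time is passed in explicitly for testability.
    """

    def __init__(self, rate: float, capacity: float) -> None:
        self.rate = rate
        self.capacity = capacity
        self.tokens = capacity  # start full so an initial burst is allowed
        self.last = 0.0

    def allow(self, now: float, cost: float = 1.0) -> bool:
        # Refill based on elapsed time, capped at capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.rate)
        self.last = now
        if self.tokens >= cost:
            self.tokens -= cost
            return True
        return False  # caller should throttle or reject the publish


# Hypothetical per-producer limit: 2 messages/second, bursts of up to 3.
bucket = TokenBucket(rate=2.0, capacity=3.0)
decisions = [bucket.allow(t) for t in (0.0, 0.1, 0.2, 0.3, 1.0)]
print(decisions)  # [True, True, True, False, True]
```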

Pros

- Easy to integrate with existing applications

- Low publish latency with strong durability guarantees

- Supports high-level APIs for Java, Go, Python, C++, and C#.

- Built-in geo-aware replication that allows replicating data across data centers in different geographical locations.

- Full end-to-end encryption from the client to the storage nodes

Cons

- It has a small community and forums might not be helpful

- Comes with higher operational complexity

- It does not allow consumers to acknowledge messages from a different thread

Examples of companies using Pulsar are MercadoLibre, Verizon Media, and Splunk.

Apache Pulsar on Stackshare

IBM Streams

IBM Streams enables you to build real-time analytical applications using Streams Processing Language (SPL) or Python. It’s developer-friendly and enables you to deploy applications that can run in the IBM Cloud.

Streams powers a Stream Analytics service that allows you to ingest and analyze millions of events per second. You can create queries to focus on specific data and create filters to refine the data on your dashboard to dive deeper.

Pros

- End-to-end processing with sub-millisecond latency.

- A comprehensive set of toolkits

- Integrates with IDEs for collaboration with other applications

- Lets you use the Streams runtime from Java and Python

- Visual-driven interface

Cons

- Creating reports can be overwhelming

- Steep learning curve

Stream processing and Ably

We hope you will find this article helpful in your search for a stream processing engine. There are plenty of options available, each with its advantages and disadvantages, and you must carefully analyze them before choosing the right one for your specific use case.

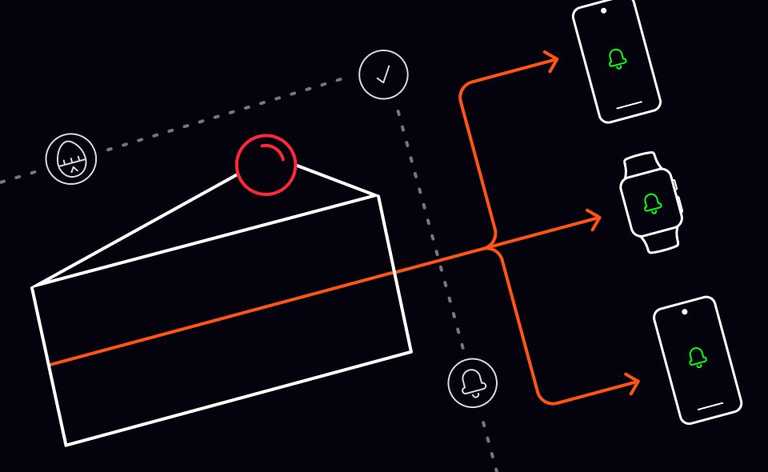

Ably is an enterprise-grade realtime pub/sub messaging platform. We work alongside backend streaming & stream processing solutions, empowering organizations to efficiently design, quickly ship, and seamlessly scale critical realtime functionality delivered to end-users.

For example, we integrate with Kafka Streams, helping Experity deliver critical data to the realtime BI dashboards that enable urgent care providers to drive efficiency and enhance patient care.

Not only can you use Ably to stream events from your backend stream processing engines directly to web, mobile, and IoT clients in realtime, but our platform can also send data the other way, into your streaming service (see Reactor Firehose for details).

Ably is mathematically modeled around Four Pillars of Dependability, so we’re able to ensure that events are delivered to consumers with data integrity guarantees at consistently low latencies over a secure, reliable, and highly available global edge network.

If you’d like to find out more about how Ably works alongside stream processing engines, enabling you to build dependable realtime apps, get in touch or sign up for a free account.