The Ably Blog

Notes from the people building realtime

Ably engineering

Latest writing tagged Ably engineering.

Recent posts

Ably engineering

Engineering message appends for AI Transport: three vignettes

Ably engineering

AWS us-east-1 outage: How Ably’s multi-region architecture held up

Realtime experiences

Patterns for building realtime features

Ably engineering

Scaling Pub/Sub with WebSockets and Redis

Ably engineering

Data integrity in Ably Pub/Sub

Ably engineering

Optimizing global message transit latency: a journey through TCP configuration

Ably engineering

Measuring and minimizing latency in a Kafka Sink Connector

Ably engineering

Database generated events: LiveSync’s database connector vs CDC

Ably engineering

Reliably syncing database and frontend state: A realtime competitor analysis

Ably engineering

Overcoming scale challenges with AWS & CloudFront - 5 key takeaways

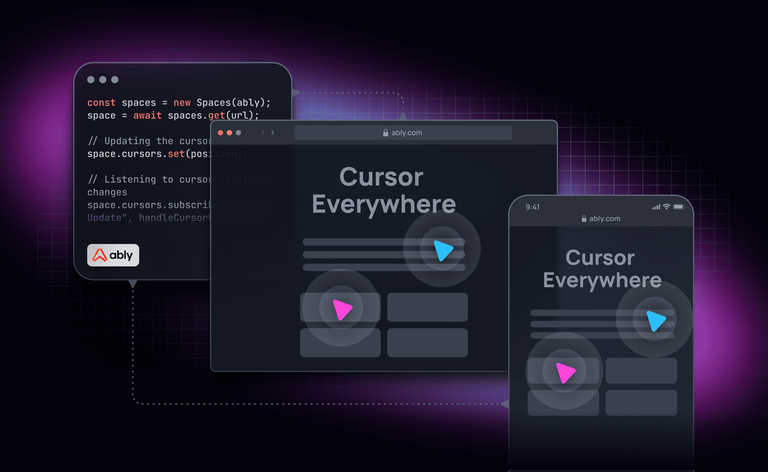

Ably engineering

Cursor Everywhere: An experiment on shared cursors for every website

Ably engineering