What AI Engineer Europe revealed about where production AI is heading

I spoke at AI Engineer Europe last week and came away with a clearer picture of where the industry actually is right now.

My talk was about why AI user experience breaks at the transport layer. But the bigger takeaway wasn't from my own session. It was from watching what the rest of the room was building, and what problems they were running into.

What stood out to me

Over the past year or two, most AI engineering effort has gone into internal tooling: coding agents, productivity workflows, and internal knowledge search. That work has been genuinely valuable.

But at AI Engineer Europe, I noticed a shift. More teams are building AI experiences for customers, not for themselves. Customer-facing AI agents, support products, and interfaces where real users are doing real work.

When AI moves from internal tooling into products that customers actually rely on, UX becomes load-bearing in a way it wasn't before.

A few themes kept coming up across the talks.

First, chat is evolving into something richer. Ido Salomon and Liad Yosef showed how MCP applications point toward UI pulled directly from real applications users already know: interactive surfaces with bidirectional control, not static components rendered from model output. That felt like a glimpse of where things are heading.

Second, the most sophisticated products are moving beyond chat entirely: copilots, background agents, and multi-agent systems working together. Legora is a good example of a team already operating at this level.

Third, and this is the one that stuck with me most: quality is becoming the differentiator. Linear's Tuomas Artman made the point that AI increases throughput, which makes taste more important, not less. When everyone can ship fast, what stands out is the quality of what you ship.

I think he's right.

Where I see the experience breaking

In my talk, I focused on a specific failure pattern I've seen repeatedly across more than 40 engineering teams building production AI products.

The default today is direct HTTP streaming. Most frameworks, including the Vercel AI SDK and TanStack AI, use Server-Sent Events (SSE) over HTTPS. The client makes a request to the agent and holds that connection open for the duration of the response.

The agent calls the LLM, and the token stream comes back over that connection. It's easy to get working.

The problem is that this architecture assumes one client, one connection, one agent. And that assumption breaks down as soon as you try to build anything richer than a basic chat interface.

Three failure patterns come up consistently.

Resilient delivery. A mobile user walks out of Wi-Fi range. The connection drops, and the tokens the LLM is still generating have nowhere to go. To support resumption, you need to buffer events to Redis and sequence-number them.

Then you need a resume handler that works out what each client missed and replays exactly the right set in order. Every team that needs this builds it from scratch, because the stream is coupled to the connection. When one drops, so does the other.

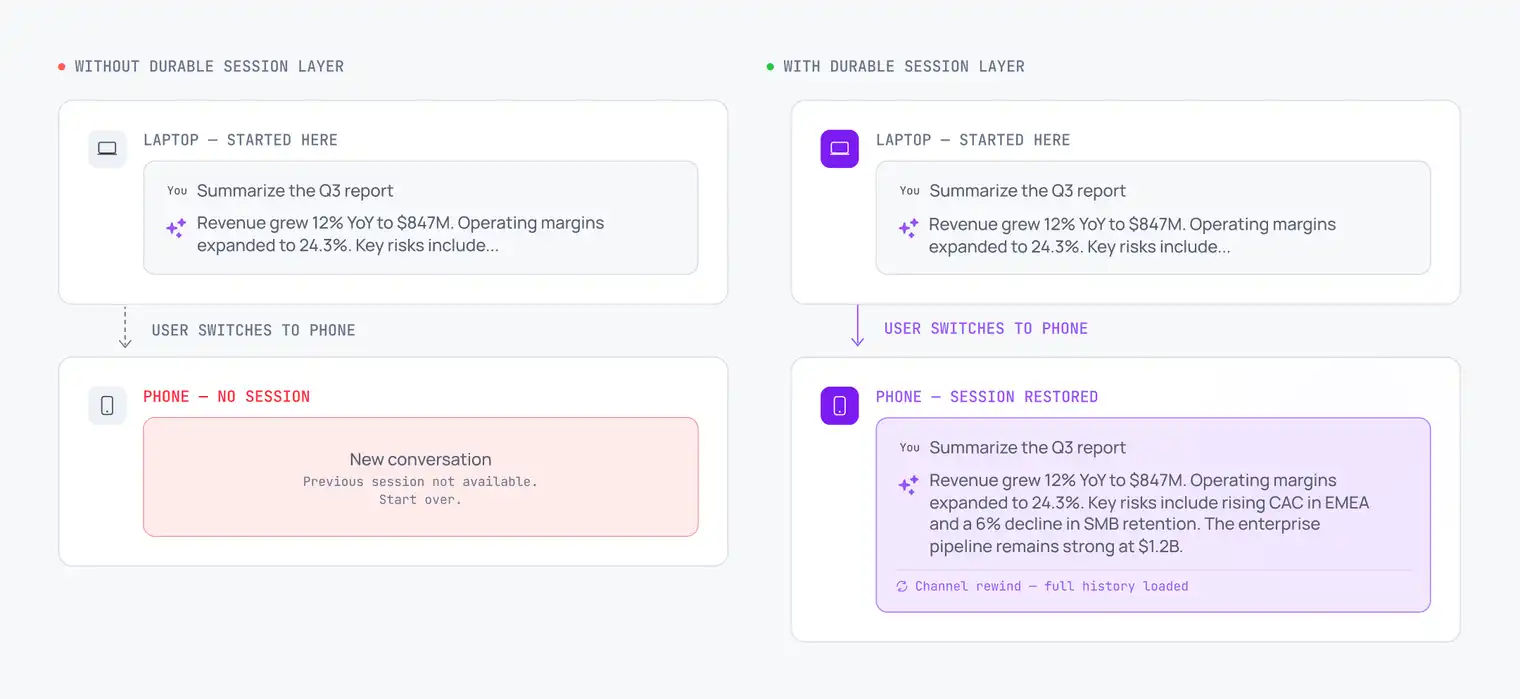
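To make the buffer-and-replay work concrete, here is a minimal sketch of the two pieces teams end up writing: a sequence-numbered buffer and a resume handler. It uses an in-memory array as a stand-in for Redis, and `StreamEvent`, `ReplayBuffer`, and `replayAfter` are names I've made up for illustration, not part of any framework.

```typescript
// Hypothetical event shape; the field names are illustrative assumptions.
interface StreamEvent {
  seq: number;  // monotonically increasing sequence number
  data: string; // e.g. a token or a serialized chunk
}

// In-memory stand-in for the Redis buffer described above.
class ReplayBuffer {
  private events: StreamEvent[] = [];
  private nextSeq = 1;

  // Called by the agent for every token, whether or not a client is connected.
  append(data: string): StreamEvent {
    const event: StreamEvent = { seq: this.nextSeq++, data };
    this.events.push(event);
    return event;
  }

  // Resume handler: everything after the client's last received seq, in order.
  replayAfter(lastSeenSeq: number): StreamEvent[] {
    return this.events.filter((e) => e.seq > lastSeenSeq);
  }
}

const tokenBuffer = new ReplayBuffer();
["Hello", ",", " world", "!"].forEach((token) => tokenBuffer.append(token));

// The client received seq 1 and 2, then walked out of Wi-Fi range.
const missed = tokenBuffer.replayAfter(2);
console.log(missed.map((e) => e.data).join(""));
```

Even this toy version shows why the work adds up: the client has to track its last sequence number, and a real implementation also needs expiry, ordering guarantees across processes, and a handoff from replay to live delivery.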

Continuity across surfaces. With HTTP streaming, the connection is a private pipe between the requesting client and the agent. Your other tab can't see it. Your phone can't see it.

The stream exists only for the device that established it. SSE makes this worse: because it's one-way, the client has no way to signal the agent over that connection. Cancel and resume are mutually exclusive, as Vercel's own docs acknowledge: abort is incompatible with stream resumption when using SSE.

Live control. When users try to redirect or cancel mid-stream, SSE gives them one mechanism: close the connection. The agent then faces an ambiguous signal. Did the user cancel, or did the network drop?

If it buffers for potential resumption, it keeps burning tokens on a user who's gone. If it treats a closed connection as cancellation, resumption breaks. Either way, something goes wrong.

The gap that nobody has fully addressed

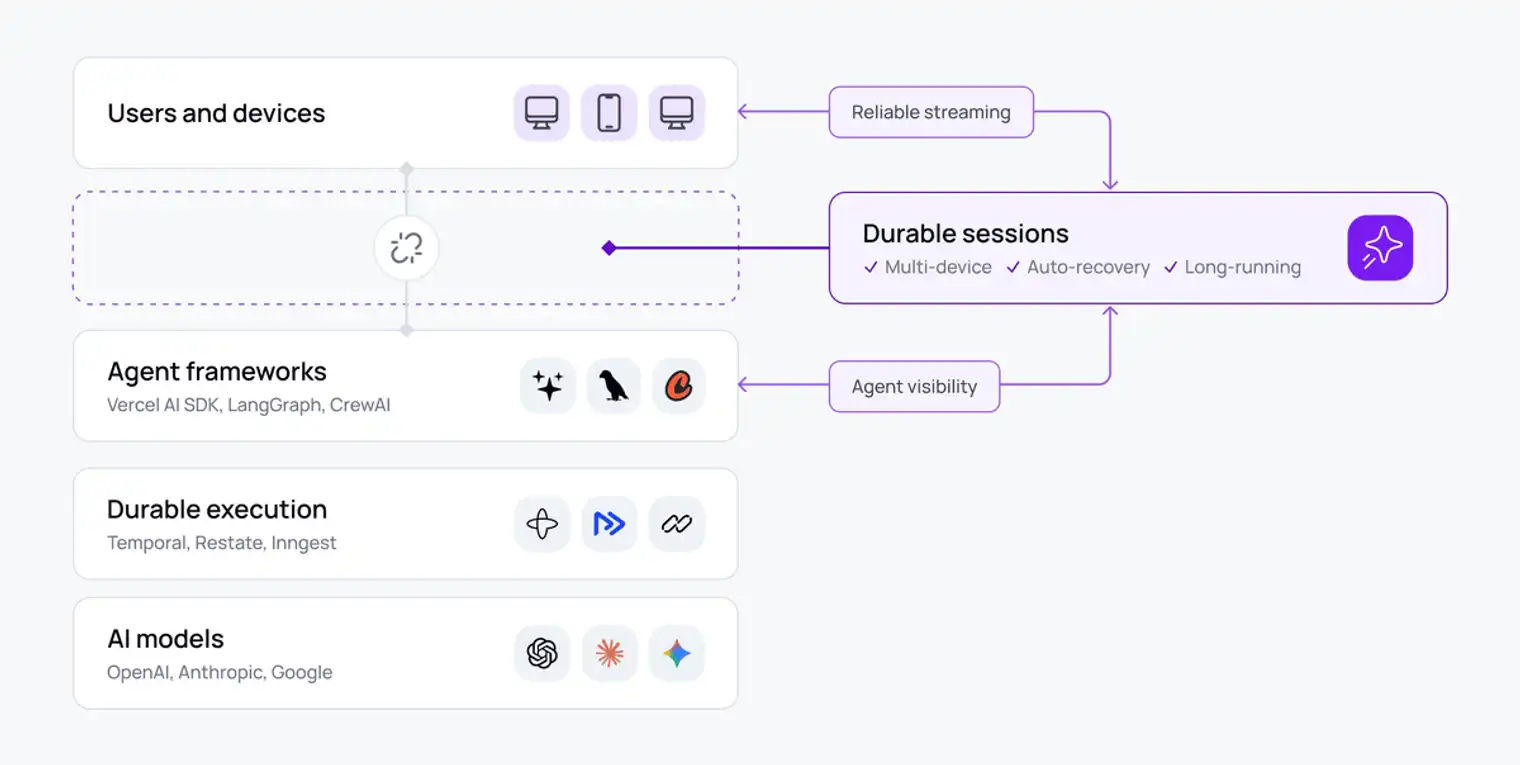

At Vercel's London event earlier this year, I heard Tom Occhino talk about durable execution as the default pattern for resilient agents. And Temporal, Inngest, and Vercel's own Workflow DevKit are genuinely good solutions to the backend resilience problem. But durable execution handles resilience inside the agent. It doesn't address what happens between the agent and the user.

What happens when the HTTP stream breaks on a page reload? What happens when the user picks up their phone mid-session? That's a different layer of the problem, and for the most part there is no off-the-shelf infrastructure for it yet.

Vercel's lead maintainer has said publicly that solving stream resumption requires a persistent channel to the server, with WebSockets as one option. What that points at is a shared, addressable resource that persists independently of any single connection.

In practice, every team that gets serious about production arrives at the same conclusion. They need a Redis buffer between AI backend and client, decoupling generation from delivery.

However, a Redis buffer is only the first problem they solve. Sequencing replays, routing signals from multiple clients, disambiguating cancellation from network drop, surfacing sub-agent activity without relay logic – each of these gets rebuilt independently, on top of infrastructure that was never designed to carry it. The pattern keeps compounding until teams have built, in pieces, what should have been a single addressable layer from the start.

The pattern that's emerging

The concept I'm seeing more teams adopt is durable sessions. It's a stateful layer between agent and client, persistent across connection drops, device switches, and agent crashes.

The agent publishes events to the session independently of any client connection. Clients subscribe and resume from their last received message on reconnect. The session is a shared resource, not a private pipe.
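A rough sketch of that shape, assuming a single-process, in-memory session: `DurableSession`, `publish`, and `subscribe` are hypothetical names for illustration, and a real implementation would persist the log and run as a network service.

```typescript
// Hypothetical types for illustration only.
interface SessionEvent {
  seq: number;    // position in the session log
  source: string; // who published it, e.g. "agent"
  data: string;
}

type Listener = (event: SessionEvent) => void;

class DurableSession {
  private log: SessionEvent[] = [];
  private listeners: Listener[] = [];

  // The agent publishes to the session, not to a particular connection.
  publish(source: string, data: string): SessionEvent {
    const event: SessionEvent = { seq: this.log.length + 1, source, data };
    this.log.push(event);
    this.listeners.forEach((listener) => listener(event));
    return event;
  }

  // Replay everything after `afterSeq`, then deliver live events.
  // Returns a function that detaches the listener (a dropped connection).
  subscribe(listener: Listener, afterSeq = 0): () => void {
    for (const event of this.log) {
      if (event.seq > afterSeq) listener(event);
    }
    this.listeners.push(listener);
    return () => {
      this.listeners = this.listeners.filter((l) => l !== listener);
    };
  }
}

const session = new DurableSession();
const laptop: string[] = [];
const phone: string[] = [];

const dropLaptop = session.subscribe((e) => laptop.push(e.data));
session.publish("agent", "Hel");
dropLaptop();                   // the laptop's connection drops after seq 1
session.publish("agent", "lo"); // the agent keeps publishing regardless

session.subscribe((e) => phone.push(e.data));     // phone joins mid-session
session.subscribe((e) => laptop.push(e.data), 1); // laptop resumes after seq 1
session.publish("agent", "!");

console.log(laptop.join(""), phone.join(""));
```

Both devices end up with the same complete output, even though neither was connected for the whole run. The point of the design is that the log, not the connection, is the source of truth.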

Multiple clients can connect simultaneously and all see the same activity: a second tab, a phone, and a human operator. Because clients hold a persistent connection to the session rather than to a specific agent, they can send signals upstream at any time. Cancel, redirect, and steering become explicit messages, not ambiguous TCP side effects.
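Here is a small sketch of what explicit signals buy you on the agent side, under the assumption that control messages arrive separately from connection lifecycle events. `ControlSignal`, `AgentRun`, and the handler names are hypothetical, chosen for illustration.

```typescript
// Hypothetical control signals a client can send upstream through the session.
type ControlSignal =
  | { kind: "cancel" }
  | { kind: "redirect"; instruction: string };

type RunState = "generating" | "cancelled";

class AgentRun {
  state: RunState = "generating";
  instructions: string[] = [];

  // An explicit message from the user: the intent is unambiguous.
  onSignal(signal: ControlSignal): void {
    if (this.state === "cancelled") return; // ignore signals for a finished run
    if (signal.kind === "cancel") {
      this.state = "cancelled"; // stop spending tokens
    } else {
      this.instructions.push(signal.instruction); // steer the ongoing run
    }
  }

  // A closed connection says nothing about intent, so the run continues
  // and the session keeps the events for whoever reconnects.
  onClientDisconnected(): void {
    // Intentionally no state change.
  }
}

const run = new AgentRun();
run.onClientDisconnected(); // network drop: keep generating
run.onSignal({ kind: "redirect", instruction: "focus on pricing" });
run.onSignal({ kind: "cancel" }); // the user really did cancel
console.log(run.state, run.instructions);
```

With the two paths separated, the agent no longer has to guess whether a dropped connection means "stop" or "I'll be back".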

In a multi-agent setup, this architecture removes a real structural problem. When every sub-agent publishes independently to the session, the orchestrator can focus on orchestration rather than relaying granular progress updates. The client subscribes to one place and gets full visibility.

At Ably, this is what our channels do. Pub/Sub inherently decouples publishers and subscribers, and channels are independently addressable, persistent, and resumable. We built Ably AI Transport around this pattern: a drop-in SDK for teams building on any AI framework.

What I think this means

The shift from internal tooling to customer-facing AI is already underway. The benchmark for what a production AI experience needs to deliver has changed with it.

Streaming tokens from a model to a screen is a solved problem. What isn't solved for most teams is everything around it: reconnection handling, cross-device continuity, bidirectional control, and visibility into what the agent is actually doing. These are transport-layer problems, and most AI frameworks don't address them.

The teams shipping the best AI experiences right now have all made the same architectural decision: decouple generation from delivery. When that's in place, the session survives reconnects, the stream picks up where it left off, and the user stays in control.

We'll be going deeper on this in an upcoming webinar, showing how durable sessions work in practice and how teams are implementing the pattern. If you're building customer-facing AI and want to see how it applies to your architecture, keep your eyes peeled, or get in touch.