Scaling AWS API Gateway WebSocket APIs - what you need to consider

Nowadays internet users increasingly expect their online experiences to be interactive, responsive, immersive, and realtime by default. And when it comes to building realtime, event-driven functionality for end-users, the AWS ecosystem is one of the most popular choices available to developers. In this blog post, we’ll cover considerations related to scaling AWS API Gateway WebSocket APIs and the challenges you’ll face along the way.

Note that this blog post does not discuss scaling AWS API Gateway REST APIs; the focus is solely on WebSocket APIs.

AWS API Gateway overview

AWS API Gateway is a managed cloud service that allows developers to create, publish, maintain, secure, and monitor RESTful and WebSocket APIs. Any WebSocket or REST API you create with AWS API Gateway acts as a “front door” of sorts. It allows apps to access data and business logic and trigger functionality from your backend services, such as AWS Lambda, Amazon Elastic Compute Cloud (Amazon EC2), Amazon DynamoDB, and Amazon Kinesis.

An AWS API Gateway WebSocket API is a stateful frontend for AWS services and HTTP endpoints. WebSocket APIs support low-latency two-way communication between client apps and your backend. This makes them a suitable choice for realtime use cases like chat apps, streaming dashboards, multiplayer collaboration, and realtime alerts and notifications.

Building serverless architectures with AWS API Gateway WebSockets

By combining Amazon API Gateway WebSocket APIs with AWS services like Lambda, you can build serverless, elastic, realtime apps. Serverless WebSockets appeal because they remove the need for server provisioning or scaling management—and typically follow a pay-per-use model.

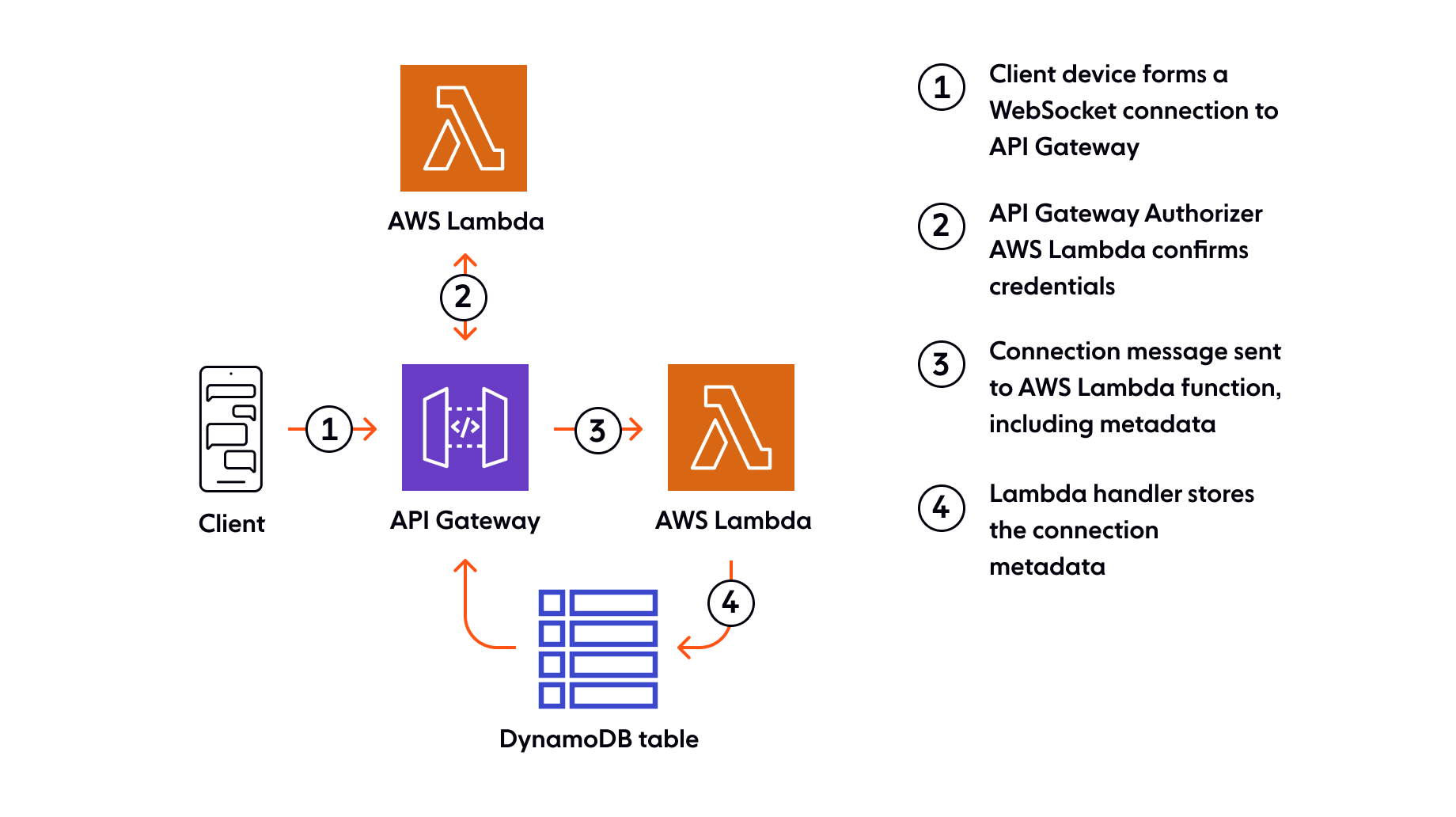

We’ll now look at a basic chat example to see what a serverless architecture with AWS API Gateway WebSockets looks like. First, the sequence by which a client WebSocket connection is made and stored:

Client devices establish WebSocket connections to AWS API Gateway. Each active WebSocket connection has an individual callback URL in API Gateway that is used to push messages back to the corresponding client.

AWS Lambda authorizers confirm that the devices which initiated the WebSocket connections have the correct credentials.

Once authenticated, WebSocket connection metadata is sent to another AWS Lambda function.

This second Lambda function inspects the metadata and stores appropriate information in AWS DynamoDB, a NoSQL document database. This is needed because AWS API Gateway doesn’t store connection state/information itself.
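The connection steps above can be sketched as a single handler. This is a minimal illustration, not a production implementation: `ConnectionStore` is a hypothetical in-memory stand-in for the DynamoDB table, and the handler name is our own. The event fields used (`requestContext.connectionId` and `requestContext.routeKey`) are the ones API Gateway passes to Lambda for the `$connect` and `$disconnect` routes.

```python
import time


class ConnectionStore:
    """Hypothetical in-memory stand-in for a DynamoDB connections table."""

    def __init__(self):
        self.items = {}

    def put(self, connection_id, metadata):
        self.items[connection_id] = metadata

    def delete(self, connection_id):
        self.items.pop(connection_id, None)


store = ConnectionStore()


def connection_handler(event, context=None):
    """Record or remove a connection when $connect or $disconnect fires."""
    request_context = event["requestContext"]
    connection_id = request_context["connectionId"]
    route = request_context["routeKey"]

    if route == "$connect":
        # API Gateway keeps no connection state, so we persist it ourselves.
        store.put(connection_id, {"connected_at": int(time.time())})
    elif route == "$disconnect":
        store.delete(connection_id)

    # A 200 status tells API Gateway the route was handled successfully.
    return {"statusCode": 200}
```

In a real deployment the store would be replaced by calls to DynamoDB, and every connect and disconnect would cost one Lambda invocation plus one database write.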

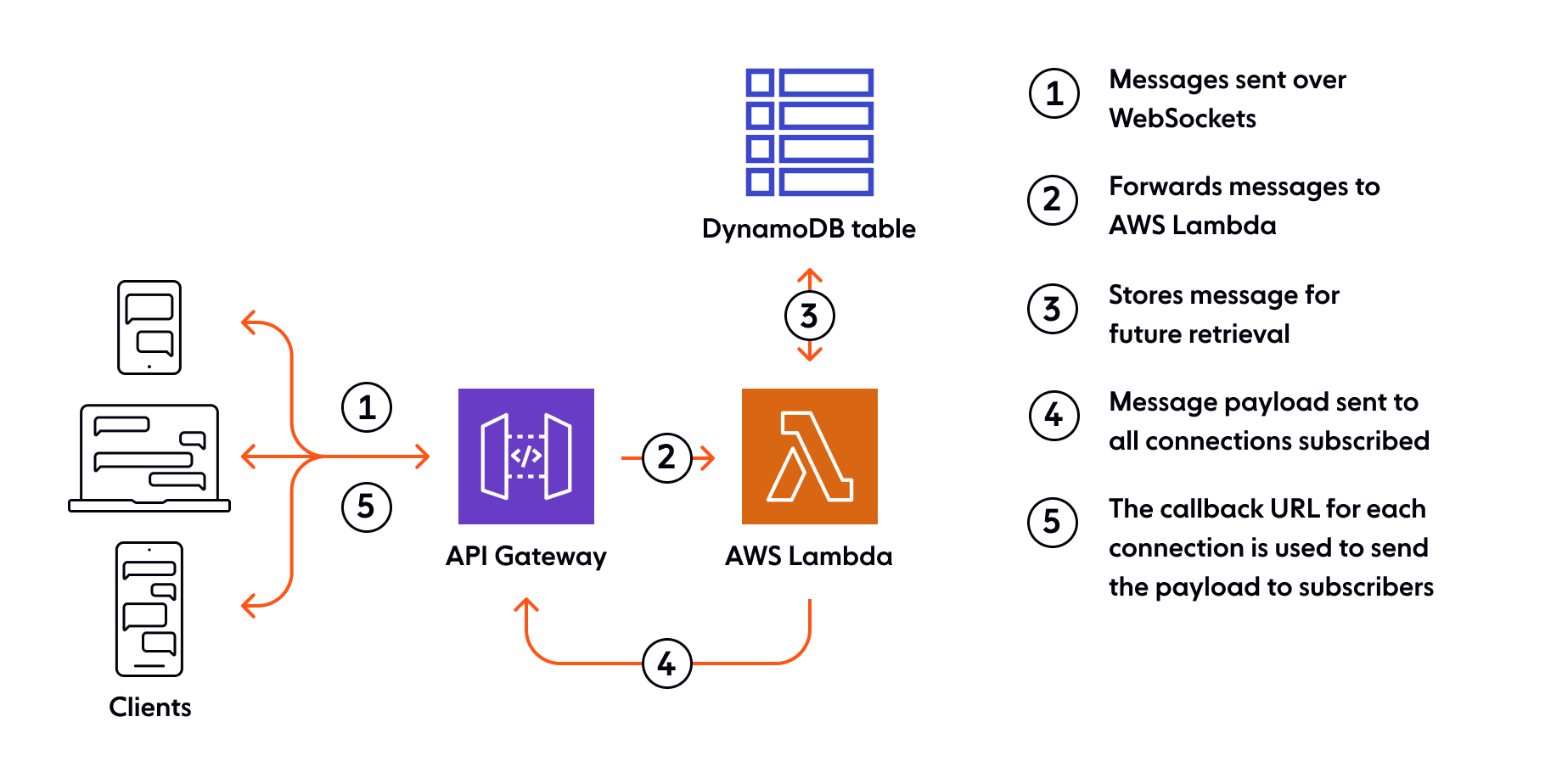

Now let’s consider the point at which a chat user sends a message, and another user receives it:

A client sends a chat message over WebSockets.

On receipt, AWS API Gateway forwards the message to the appropriate AWS Lambda function.

Upon invocation, the AWS Lambda function stores the message in DynamoDB for future retrieval (this is useful, for example, to implement chat history).

The AWS Lambda function checks which WebSocket connections the message should be forwarded to, and sends AWS API Gateway the ID for each of these connections, together with the message payload.

AWS API Gateway uses the callback URLs to send the payload to the appropriate WebSocket clients.
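The message flow above might look roughly like the following sketch. The `client` argument is assumed to expose a `post_to_connection(ConnectionId=..., Data=...)` method, which is the shape of the call on the boto3 `apigatewaymanagementapi` client; here a hypothetical `FakeManagementClient` records calls instead of contacting AWS. The `history` list and `connection_ids` argument stand in for DynamoDB lookups, and the message body format is an assumption.

```python
import json


class FakeManagementClient:
    """Hypothetical test double; records calls instead of calling AWS."""

    def __init__(self):
        self.sent = []

    def post_to_connection(self, ConnectionId, Data):
        self.sent.append((ConnectionId, Data))


def message_handler(event, connection_ids, history, client):
    """Store an incoming chat message, then forward it to every other connection."""
    sender_id = event["requestContext"]["connectionId"]
    payload = json.loads(event["body"])

    # Persist the message first so it is available as chat history.
    message = {"from": sender_id, "text": payload["text"]}
    history.append(message)

    data = json.dumps(message).encode("utf-8")
    for connection_id in connection_ids:
        if connection_id == sender_id:
            continue
        # One API call per recipient: there is no batch or broadcast call.
        client.post_to_connection(ConnectionId=connection_id, Data=data)

    return {"statusCode": 200}
```

Note the loop: the number of outbound calls grows linearly with the number of recipients, which matters for the quotas discussed later in this post.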

This concludes our basic example of a serverless WebSocket architecture with AWS API Gateway. The question is - how well does it scale?

What are the challenges and limitations of scaling AWS API Gateway WebSocket APIs?

It is well known that managing and scaling any WebSocket solution is a tough nut to crack (primarily because WebSocket is a stateful protocol, with persistent connections). With that in mind, we’ll now cover some of the specific challenges you’ll face if you plan to build and deliver realtime features at scale with AWS API Gateway WebSocket APIs.

Restrictive quotas

There are multiple usage limits imposed by Amazon API Gateway for WebSocket APIs that you need to be aware of, especially if you’re planning to build and deliver realtime features at scale.

First of all, there’s a throttle quota of 10,000 API requests per second, with an additional burst capacity of 5,000 requests. The quota applies per account, per region. These limits might seem generous, and they’re certainly enough for small and medium-scale projects. However, you can reach these quotas regularly if you have tens of thousands of concurrent users and you’re sending high-frequency updates, especially since you can’t broadcast a message to multiple WebSocket clients with a single API call.

It’s perhaps strange, but AWS API Gateway doesn’t enforce a quota on the number of concurrent WebSocket connections. Instead, there’s a limit on how many new WebSocket connections you can open per second. By default, the limit is 500 connections per second (according to the documentation, this quota can be increased, although it’s unclear to what extent). Assuming clients connect at the maximum rate per second allowed, in one hour you’d reach 1.8 million concurrent connections.

This number of concurrent users is not at all negligible. However, it’s not good enough for certain hyperscale use cases. For example, DAZN streams realtime sports updates (e.g., goals, latest scores) to millions of sports fans around the world. DAZN investigated AWS API Gateway as a potential solution for pushing these realtime updates to their user base over WebSockets. Ultimately, AWS API Gateway was disqualified, as the 1.8 million new WebSocket connections per hour cap was a deal-breaker.
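The connection-rate arithmetic is easy to reproduce. The short sketch below uses the default quota of 500 new connections per second quoted above; the function names and the five-million-client figure are our own, chosen purely for illustration.

```python
import math

NEW_CONNECTIONS_PER_SECOND = 500  # default quota quoted above


def connections_after(seconds, rate=NEW_CONNECTIONS_PER_SECOND):
    """Upper bound on connections opened after `seconds` at the maximum rate."""
    return seconds * rate


def seconds_to_connect(clients, rate=NEW_CONNECTIONS_PER_SECOND):
    """Minimum time needed for `clients` to connect at the maximum rate."""
    return math.ceil(clients / rate)


print(connections_after(3600))        # 1800000
print(seconds_to_connect(5_000_000))  # 10000
```

In other words, at the default quota an audience of five million would need at least 10,000 seconds (close to three hours) just to get connected, which is a poor fit for events where everyone joins at kick-off.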

Connection state tracking doesn’t scale well

AWS API Gateway doesn’t manage WebSocket connection metadata, so it has to be stored in a database such as Amazon DynamoDB, with a Lambda function updating the store on every connection and disconnection. With large numbers of clients connecting at once, you could hit Lambda concurrency limits. You could instead use an AWS service integration so that API Gateway calls DynamoDB directly, but you’ll still need to be mindful of the resulting high level of database usage.

Another aspect that we’ve not addressed is abrupt disconnection. When a client connection drops without warning, the WebSocket connection isn’t properly cleaned up. The database can end up storing identifiers for connections that no longer exist, which wastes storage and leads to delivery attempts that can never succeed.
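One common mitigation is to prune stale identifiers lazily, at the moment a send fails. The sketch below assumes that posting to a connection that no longer exists raises an error (API Gateway answers such requests with HTTP 410 Gone; with boto3 this would typically surface as a `GoneException`). `ConnectionGone` and `FakeClient` are hypothetical stand-ins so that the example runs without AWS.

```python
class ConnectionGone(Exception):
    """Hypothetical stand-in for the 410 Gone error on a dead connection."""


class FakeClient:
    """Hypothetical test double that fails for a configurable set of IDs."""

    def __init__(self, gone):
        self.gone = set(gone)
        self.sent = []

    def post_to_connection(self, ConnectionId, Data):
        if ConnectionId in self.gone:
            raise ConnectionGone(ConnectionId)
        self.sent.append(ConnectionId)


def send_and_prune(client, connections, data):
    """Send data to each connection and drop IDs that turn out to be stale."""
    stale = []
    for connection_id in sorted(connections):
        try:
            client.post_to_connection(ConnectionId=connection_id, Data=data)
        except ConnectionGone:
            stale.append(connection_id)
    for connection_id in stale:
        # In a real system this would be a delete against the database.
        connections.discard(connection_id)
    return stale
```

This keeps the table tidy without a separate sweep, but a stale entry is only discovered when a message is next sent to it.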

One way to avoid zombie connections is to periodically check if a connection is “alive” by sending heartbeats. However, you should closely monitor the effect heartbeats have on your system, and the ratio of heartbeats to actual messages being sent over WebSockets. There are situations when you might find that you are sending more heartbeats than messages (text or binary frames) over WebSockets.

This isn't really impactful in the context of just one connection, but having thousands or even millions of concurrent WebSocket connections with a high heartbeat rate will add significant load on the server layer.
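To get a feel for the numbers, here is a rough back-of-the-envelope calculation. The figures (one million connections, a 30-second heartbeat interval, 10,000 application messages per second) are assumptions chosen for illustration, not measurements.

```python
def heartbeats_per_second(connections, interval_seconds):
    """Average heartbeat rate when each connection pings once per interval."""
    return connections / interval_seconds


def heartbeat_ratio(connections, interval_seconds, messages_per_second):
    """How many heartbeats are sent for every application message."""
    return heartbeats_per_second(connections, interval_seconds) / messages_per_second


print(round(heartbeats_per_second(1_000_000, 30)))       # 33333
print(round(heartbeat_ratio(1_000_000, 30, 10_000), 2))  # 3.33
```

Under these assumptions the system would handle more than three heartbeats for every real message, which is why the heartbeat interval deserves as much tuning attention as the message path itself.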

You can’t broadcast messages to connected clients

The ability to broadcast data in a fan-out, 1:many pattern is desirable (if not critical) for many realtime use cases, including:

Streaming live score updates.

Sending traffic updates.

Transmitting financial information, such as stock quotes and market updates.

Distributing news alerts.

Group chat.

These are the types of use cases where pub/sub messaging truly shines, and that’s why plenty of WebSocket solutions out there offer pub/sub capabilities. However, AWS API Gateway is not on this list. There’s no pub/sub messaging, nor is there any other way to natively send a message (the same message) to multiple WebSocket connections with a single API call.

This shortcoming becomes problematic if you have a broadcast use case and you’re planning to build a system with AWS API Gateway WebSockets that can scale to thousands or even millions of users. You would have to send updates in a 1:1 (point-to-point) fashion to each user, which creates significant additional load on the server layer.

In addition, depending on the number of concurrent users and the frequency of updates, you could regularly hit some AWS-imposed limits, like the maximum number of API calls allowed per second (10,000 by default, with an additional burst capacity of 5,000 requests). The higher the number of concurrent users, the higher the chance you’ll be restricted by this limit. In short, this leads to:

API request bloat.

Scaling issues (as each message is a separate call).

Possible API quota exhaustion.
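The effect of point-to-point delivery on the request quota can be estimated with simple arithmetic. The sketch below uses the 10,000 requests per second default quoted above and ignores burst capacity; the audience sizes and update rate are assumptions for illustration.

```python
REQUESTS_PER_SECOND = 10_000  # default throttle quota quoted above


def calls_per_second(recipients, updates_per_second):
    """Each update costs one API call per recipient."""
    return recipients * updates_per_second


def seconds_to_deliver(recipients, rate=REQUESTS_PER_SECOND):
    """Minimum time to push one update to every recipient at the quota."""
    return recipients / rate


# 50,000 users receiving one update per second needs 5x the default quota.
print(calls_per_second(50_000, 1) / REQUESTS_PER_SECOND)  # 5.0

# A single update to one million users takes at least 100 seconds.
print(seconds_to_deliver(1_000_000))  # 100.0
```

For a live score or a stock quote, an update that takes over a minute and a half to reach the last recipient is no longer realtime in any useful sense.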

It’s hard to make it globally available

AWS API Gateway is a regional service. The obvious downside of a single-region design is that it negatively impacts the overall latency, reliability, and availability of your system.

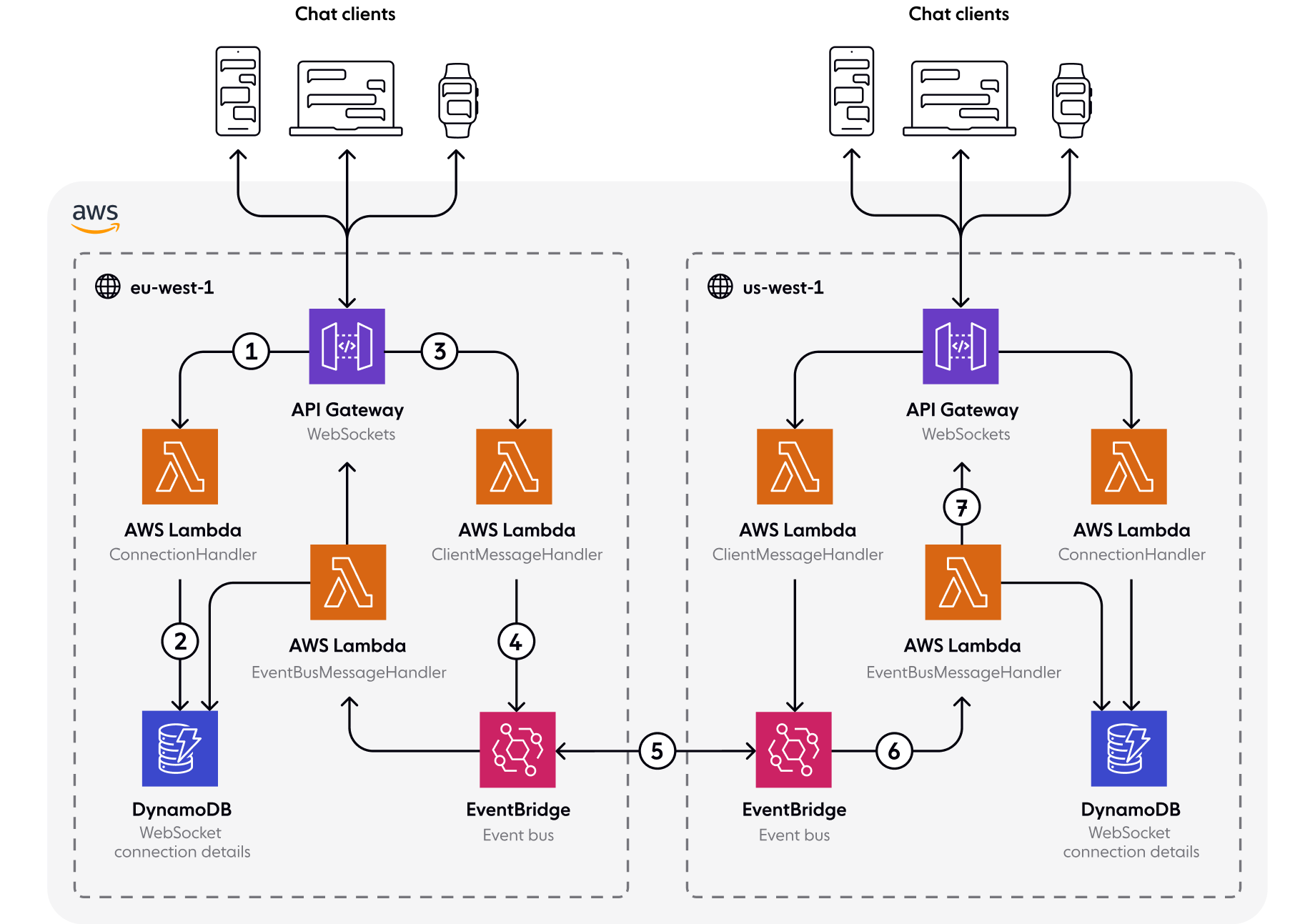

That being said, even though AWS API Gateway itself is single-region, you can glue it together with other AWS services to create a serverless multi-region architecture, thus decreasing latency, and improving resiliency. Let’s see what this architecture might look like for a chat use case:

When a chat user opens a WebSocket connection to an AWS API Gateway WebSocket endpoint in a region, the service invokes an AWS Lambda function (ConnectionHandler).

The ConnectionHandler function stores the connection details in a regional DynamoDB database.

When the user sends a chat message over the WebSocket connection, AWS API Gateway invokes the ClientMessageHandler Lambda function.

ClientMessageHandler publishes the chat message to AWS EventBridge, the event bus in our architecture.

AWS EventBridge replicates the chat message data into other regions.

AWS EventBridge invokes the EventBusMessageHandler AWS Lambda function from every region and forwards the chat message.

Each regional EventBusMessageHandler function receives the chat message and sends it to all relevant clients connected to the AWS API Gateway WebSocket endpoints in the respective region. Note that the function first uses the AWS SDK to query DynamoDB to identify the active connections it needs to forward the message to.
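To make the data flow concrete, the steps above can be simulated in memory. This is a toy model only: the `Region` and `EventBus` classes are hypothetical stand-ins for the regional DynamoDB tables, API Gateway endpoints, and EventBridge, and the "room" field is an assumption about how relevant clients are identified.

```python
class Region:
    """Toy model of one region's connection table and WebSocket endpoint."""

    def __init__(self, name):
        self.name = name
        self.connections = {}  # connection_id -> room (stands in for DynamoDB)
        self.delivered = []    # (connection_id, text) pushed to clients

    def connection_handler(self, connection_id, room):
        self.connections[connection_id] = room

    def event_bus_message_handler(self, message):
        # Look up the relevant local connections, then send one by one.
        for connection_id, room in self.connections.items():
            if room == message["room"]:
                self.delivered.append((connection_id, message["text"]))


class EventBus:
    """Toy event bus that forwards every message to every region."""

    def __init__(self, regions):
        self.regions = regions

    def publish(self, message):
        for region in self.regions:
            region.event_bus_message_handler(message)


def client_message_handler(bus, room, text):
    """Publish an incoming chat message to the bus for cross-region fan-out."""
    bus.publish({"room": room, "text": text})
```

Even in this simplified form, each message triggers one handler invocation per region plus one send per recipient, so the per-message cost grows with both the number of regions and the audience size.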

While a multi-region architecture is significantly better than a single-region one, it’s not a silver bullet. That’s because it comes with additional complexity - there are a lot of moving parts involved that you need to configure and orchestrate. You have to ensure that all the AWS components that make up your architecture behave as expected and interact with each other in an optimal way. Easier said than done when we’re talking about sustaining potentially millions of concurrent WebSocket clients connecting from all over the world.

There’s no fallback transport

There are certain environments - for example, corporate networks with proxy servers - that block WebSocket connections. That’s why many WebSocket libraries and platforms offer a fallback transport capability, such as HTTP long polling or Server-Sent Events. Unfortunately, AWS API Gateway is not among them; there is no fallback provided for WebSocket APIs.

So, if you’re building WebSocket-based apps with AWS API Gateway, you have to accept that your apps won’t support older browsers, devices, or environments where WebSockets are blocked. Alternatively, you could try implementing your own fallback transport to work alongside AWS API Gateway WebSocket APIs. However, this is a non-trivial, expensive, and lengthy undertaking, especially if you want your fallback capability to perform well at scale.

Limited dependability

To meet user expectations and business needs, any large-scale system needs to be highly available and must provide strong guarantees around data integrity. So, what guarantees around availability and data integrity does AWS API Gateway offer?

AWS API Gateway provides a 99.95% uptime guarantee - which amounts to almost 4.5 hours of allowed downtime/unavailability per year. This SLA might not be reliable enough for certain use cases, where downtime simply isn’t acceptable, especially when it affects tens of thousands or even millions of concurrent users. For example, healthcare apps connecting doctors and patients in realtime, or financial services and banking apps that must be available 24/7.
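The downtime figure follows directly from the uptime percentage. A quick calculation, assuming a 365-day year:

```python
HOURS_PER_YEAR = 365 * 24


def allowed_downtime_hours(uptime_percent):
    """Yearly downtime permitted by an uptime guarantee, in hours."""
    return (100 - uptime_percent) / 100 * HOURS_PER_YEAR


print(round(allowed_downtime_hours(99.95), 2))  # 4.38
```

The same function can be used to compare other SLAs; each additional "nine" cuts the allowed downtime by a factor of ten.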

Data integrity (message ordering and guaranteed delivery) is desirable, if not critical, for many use cases. Imagine how frustrating it must be for chat users to receive messages out of order. Or think of the impact an undelivered fraud alert can have.

Unfortunately, AWS API Gateway does not provide strong data integrity guarantees in all circumstances. Likely, the users of your app will sometimes experience brief disconnections - for example, when they are switching from a mobile network to a Wi-Fi network. When this happens, AWS API Gateway doesn’t guarantee message continuity out of the box. It’s up to the developer to implement logic that ensures no messages are lost or delivered out of order upon reconnection.
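One way to implement that logic yourself is to attach a sequence number to every message and let the client ask for a replay from the last number it processed. The sketch below is a minimal illustration under that assumption; `OrderedReceiver` and `replay` are hypothetical names, and the stored history stands in for the messages your Lambda function saved to DynamoDB.

```python
class OrderedReceiver:
    """Client-side buffer that delivers messages in order, exactly once."""

    def __init__(self):
        self.next_seq = 1
        self.pending = {}
        self.delivered = []

    def receive(self, seq, text):
        if seq < self.next_seq or seq in self.pending:
            return  # duplicate, already seen
        self.pending[seq] = text
        # Release every message that is now contiguous with what we have.
        while self.next_seq in self.pending:
            self.delivered.append(self.pending.pop(self.next_seq))
            self.next_seq += 1

    def resume_from(self):
        """Sequence number to request from the server after reconnecting."""
        return self.next_seq


def replay(history, from_seq):
    """Server side: return stored (seq, text) pairs from `from_seq` onwards."""
    return [(seq, text) for seq, text in history if seq >= from_seq]
```

After a reconnection, the client would send `resume_from()` to the backend and feed the replayed messages through `receive`, which discards anything it has already delivered. This design needs durable message storage and a per-channel counter on the server, both of which you have to build and operate yourself.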

Alternatives to Amazon API Gateway WebSocket APIs

While it’s great that AWS API Gateway removes the burden of having to manage and provision WebSocket servers, it comes with limitations that you need to be aware of. We hope this article has helped you understand what those limitations are, and what challenges to expect if you intend to use AWS API Gateway at scale.

Going beyond the aspects we’ve covered in this article, it’s worth pointing out that AWS API Gateway offers basic, low-level WebSocket APIs, with no additional functionality on top. Features like:

These features make building and delivering realtime experiences for end-users much faster.

It is ultimately up to you to decide if AWS API Gateway WebSocket APIs are the best choice for your specific realtime use case. There are, of course, AWS API Gateway alternatives you can explore to see if there’s a better fit. Some of them, like Ably, provide superior guarantees at scale and a richer feature set. See how AWS API Gateway compares to Ably.

About Ably

Ably is a realtime experience infrastructure provider. Our realtime APIs and SDKs help developers power multiplayer collaboration, chat, data synchronization, data broadcast, notifications, and realtime location tracking at internet scale, without having to worry about managing and scaling messy realtime infrastructure.

Find out more about Ably and how we can help with your realtime use case:

Discover the guarantees we provide, including <36ms median roundtrip latency, guaranteed message ordering and delivery, global fault tolerance, and a 99.999% uptime SLA.

Learn about our elastically-scalable, globally-distributed edge network capable of streaming billions of messages to millions of concurrently-connected devices.

See what kind of realtime experiences you can build with Ably and check out our chat apps reference guide.

Explore customer stories to see how organizations like HubSpot, Mentimeter, and Genius Sports benefit from trusting Ably with their realtime needs.

Get started with a free Ably account and give our WebSocket APIs a try.
