Most AI applications start the same way: wire up an LLM, stream tokens to the browser, ship. That works for simple request-response. It breaks when sessions outlast a connection, when users switch devices, or when an agent needs to hand off to a human.

The cracks appear in the delivery layer, not the model. Every serious production team discovers this independently and builds its own workaround. Those workarounds don't hold once real users start hitting them.

Here's what breaks, and what the transport layer needs to handle.

The shift that creates the problem

Simple AI applications are synchronous. User sends a message, model returns a response, done. A dropped connection restarts cleanly.

Agentic applications aren't like that. They run in a loop: perceive the user's intent, reason with the model, act by calling tools or sub-agents, and observe the result. Then they go around again until the task is done.

A research agent might loop a dozen times over several minutes, calling APIs and querying databases. The user is present throughout: watching, waiting, potentially needing to redirect. The connection might drop mid-loop, the user might switch devices, or the user might realize mid-stream that the agent is heading the wrong way.

That's a different problem, and one HTTP streaming wasn't designed to solve. The backend surviving and the session surviving are two different things. What's missing is a layer that treats the conversation as durable state: persisting across connections, devices, and participants.

Durable execution makes the backend crash-proof. Durable sessions make what the user actually sees crash-proof. Most teams building agentic products need both.

What breaks in production

Tokens disappear and reconnects corrupt state. HTTP streaming delivers tokens once. A dropped connection loses them. Most workarounds handle full page reloads but not tab switches or mobile backgrounding.

Worse, naive reconnect implementations replay output the client has already received, leaving duplicated fragments, repeated tokens, or an interface in an indeterminate state. The Vercel AI SDK makes the tradeoff explicit: its resume and stop features are incompatible. You can resume a dropped stream or cancel it, but not both. A separate post gives a full breakdown of what resumable streaming requires at the infrastructure level.

Users can't see what the agent is doing. The agent is running tool calls, checking backend systems, orchestrating sub-agents. From the user's perspective it's a spinner and silence. Users abandon tasks they can't see progressing.

There's no standard mechanism for surfacing intermediate results as first-class events on the session channel.

There's no way to interrupt. Once generation starts, the user is locked out. Interruption requires bi-directional communication on a single channel: user input has to arrive while agent output is still streaming, without breaking state. One company disabled user input entirely during agent responses because the backend couldn't distinguish an intentional cancel from a dropped connection.

The agent keeps working after the user has left. No signal tells the agent the user closed the tab. Compute and token costs accumulate.

What's missing is presence: a live membership set showing who is active in the session. Agents use it to pause expensive operations when nobody is there and resume when the user returns.

Multiple agents collide. When two specialist agents are working on the same request, every intermediate update routes through the orchestrator. The orchestrator becomes a bottleneck: when it's relaying progress it doesn't care about, the architecture starts to fight itself. The multi-agent coordination post goes deeper on how this plays out with concurrent specialist agents.

Agents fail silently. Most infrastructure has no agent health mechanism at the transport level, so a crash can only be inferred from a timeout on a dead stream. With presence, a disconnect event fires immediately when the agent drops. Build on the wrong signal and recovery logic breaks under real failure conditions.

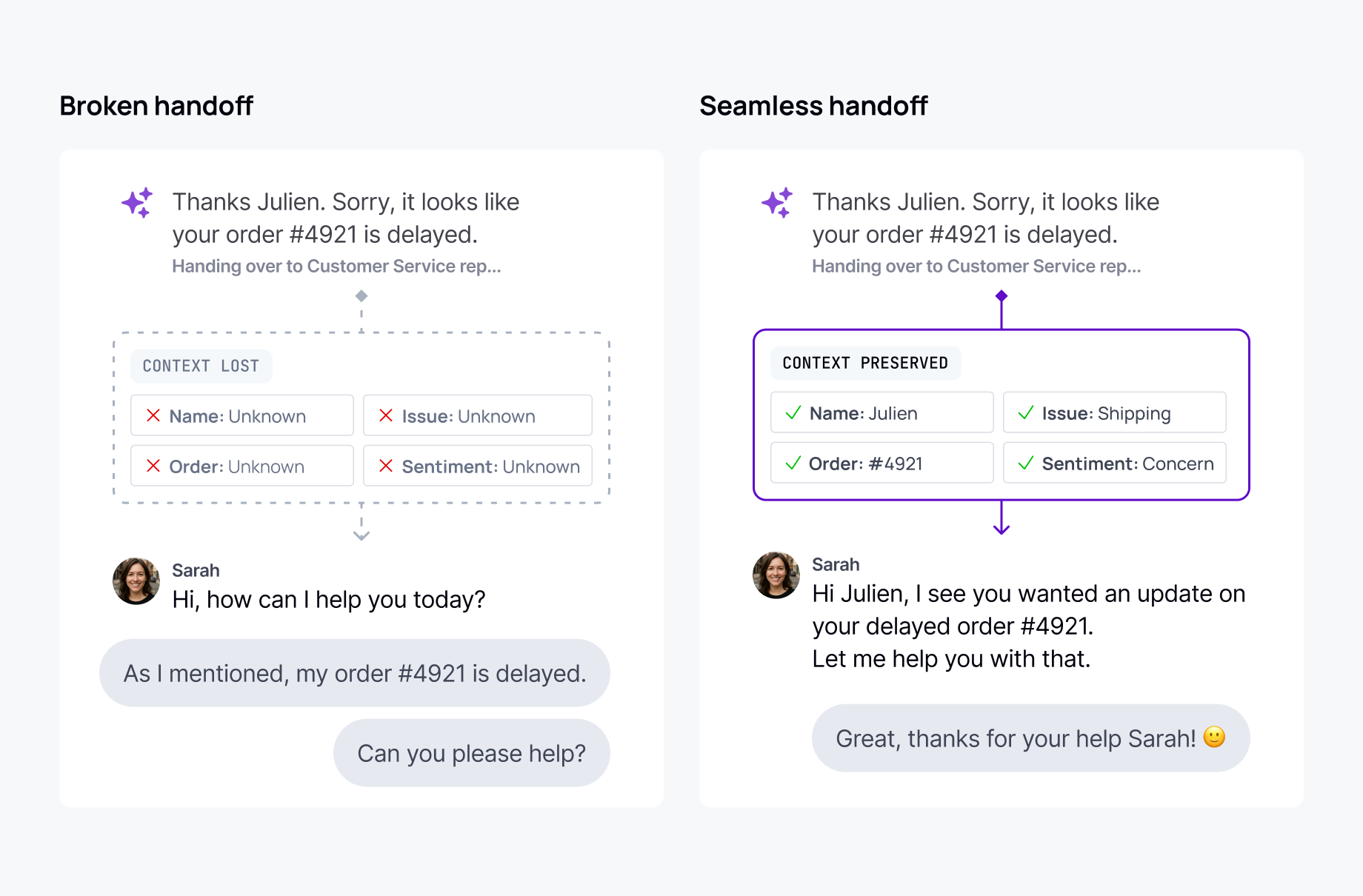

Human handovers lose context. When an agent escalates, most implementations open a different interface, summarize what happened, and hope the transfer works. The user explains their problem again. A unified channel where agents and humans can both participate addresses this: the human arrives with full history and picks up mid-thread.

There are no transport-level diagnostics. Model-level tooling shows what the model decided to do. Nothing shows what happened between the agent and the user's screen: whether a message arrived, whether a reconnection worked, whether delivery stalled. Debugging a failed session means stitching together server logs that rarely reconstruct what actually happened.

What the transport layer needs to handle

Resumable streaming. Output persists in the channel, not the connection. When a client reconnects, it rejoins from its last received position with no gaps and no duplicates. Mutable messages handle retry corruption: republish to the same message ID and the client sees clean updated state, not a second copy. Vercel built a pluggable ChatTransport interface specifically to support this pattern; TanStack AI shipped a ConnectionAdapter for the same reason. The ecosystem has diagnosed the problem and built the plug-in points.
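The resume-by-position and mutable-message ideas above can be sketched in a few lines. This is a minimal in-memory illustration, not Ably's, Vercel's, or TanStack's API: `DurableChannel`, `ClientView`, `resumeFrom`, and the `serial` field are all hypothetical names chosen for the example, and a real channel would persist its log outside the process.

```typescript
// Hypothetical in-memory stand-in for a durable channel (illustrative only).
interface ChannelMessage {
  serial: number; // position of the latest write to this message
  id: string;     // stable message ID, reused when a response is republished
  text: string;
}

class DurableChannel {
  private messages = new Map<string, ChannelMessage>();
  private nextSerial = 1;

  // Publishing to an existing ID replaces that message instead of appending a copy.
  publish(id: string, text: string): ChannelMessage {
    const message: ChannelMessage = { serial: this.nextSerial++, id, text };
    this.messages.set(id, message);
    return message;
  }

  // Everything written after the client's last received serial, in serial order.
  resumeFrom(lastSerial: number): ChannelMessage[] {
    return Array.from(this.messages.values())
      .filter((m) => m.serial > lastSerial)
      .sort((a, b) => a.serial - b.serial);
  }
}

class ClientView {
  private byId = new Map<string, string>();
  lastSerial = 0;

  apply(updates: ChannelMessage[]): void {
    for (const m of updates) {
      this.byId.set(m.id, m.text); // upsert by ID, so a replay cannot duplicate
      this.lastSerial = Math.max(this.lastSerial, m.serial);
    }
  }

  render(): string[] {
    return Array.from(this.byId.values());
  }
}

// Usage: the client misses an update while disconnected, then catches up.
const channel = new DurableChannel();
const phone = new ClientView();
channel.publish("reply-1", "Draft ans");
phone.apply(channel.resumeFrom(phone.lastSerial));
// Connection drops here; the agent keeps publishing to the channel.
channel.publish("reply-1", "Draft answer, revised");
phone.apply(channel.resumeFrom(phone.lastSerial));
console.log(phone.render()); // a single message holding the revised text
```

Because the client tracks a position rather than a connection, a second device could call `resumeFrom(0)` on the same channel and arrive at the same rendered state.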

Multi-device continuity. Session state lives on the channel, not any individual client. Any device subscribing gets the same history and live updates. The session follows the user, not the connection.

23 of 26 AI platforms evaluated in recent market research have no multi-device session continuity, including ChatGPT.

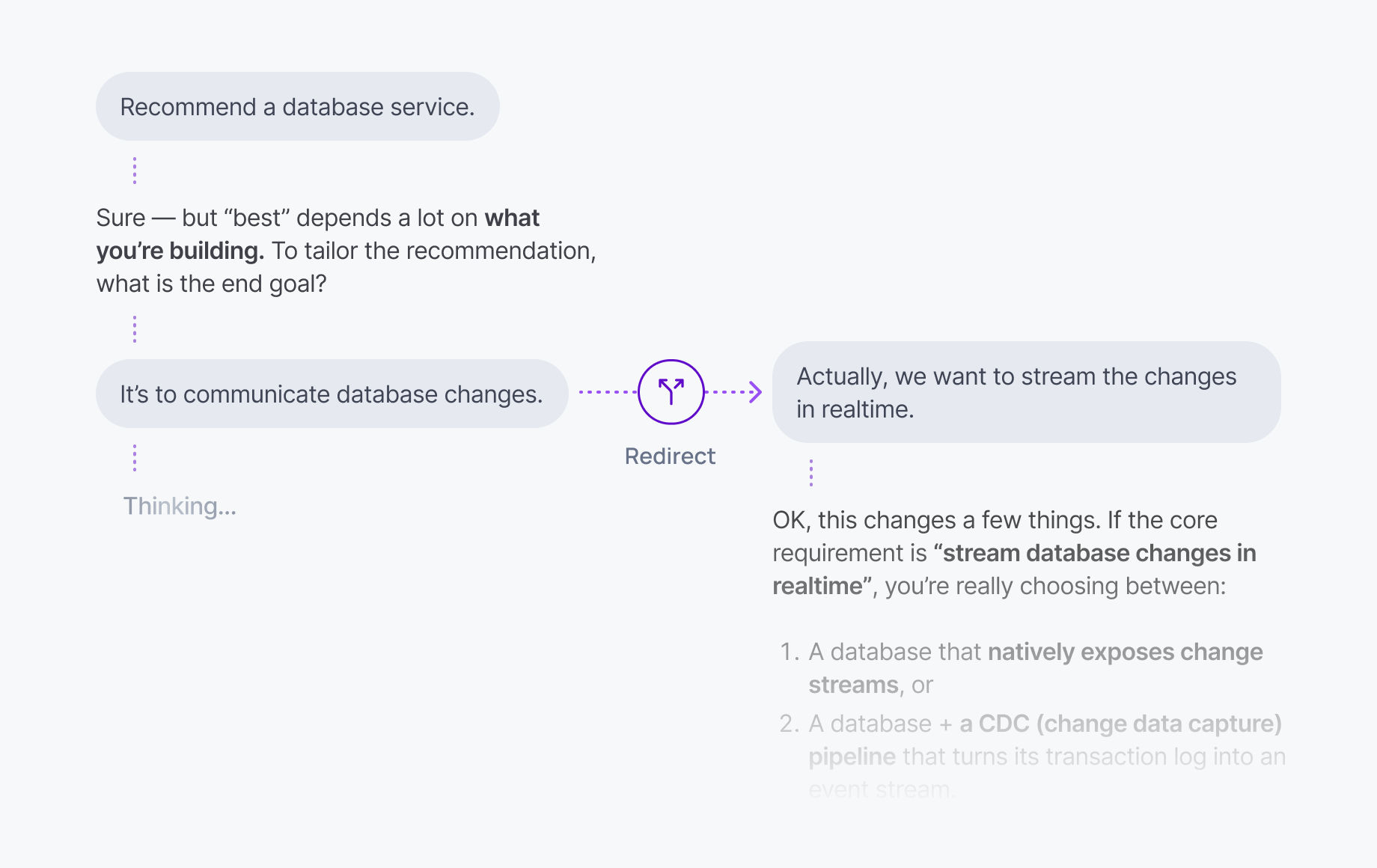

Bi-directional communication on a shared channel. User input and agent output flow on the same channel simultaneously. A redirect from the user arrives as an explicit signal while the agent is mid-stream, not as an ambiguous TCP side effect. The backend can now distinguish an intentional cancel from a dropped connection.
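One way to picture the difference between an explicit signal and a TCP side effect is a tagged event type on the shared channel. The sketch below is an assumption for illustration: the `SessionSignal` shapes, the `kind` strings, and `nextAction` are hypothetical names, not part of any real protocol.

```typescript
// Hypothetical event shapes for a shared session channel (illustrative only).
type SessionSignal =
  | { kind: "agent.token"; text: string }
  | { kind: "user.redirect"; instruction: string }
  | { kind: "user.cancel" }
  | { kind: "connection.lost"; clientId: string };

type AgentAction = "continue" | "stop" | "replan";

// The agent decides from the signal itself rather than guessing from a dead socket.
function nextAction(signal: SessionSignal): AgentAction {
  switch (signal.kind) {
    case "user.cancel":
      return "stop"; // intentional: the user asked the agent to stop
    case "user.redirect":
      return "replan"; // the user changed direction mid-stream
    case "connection.lost":
      return "continue"; // a transport hiccup, and output still lands on the channel
    case "agent.token":
      return "continue";
  }
}
```

With the cancel expressed as its own event, a dropped connection no longer has to double as a stop request, which is the ambiguity that pushed the company described earlier to disable input.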

Progress as structured events. Agent reasoning steps, tool call progress, and intermediate results should be first-class events on the channel, subscribable independently of the main response stream. Specialized agents publish progress directly. The orchestrator stops relaying events it doesn't care about.
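As a rough sketch of what "first-class, independently subscribable" could look like, the example below types each progress event and lets subscribers pick the types they want. `AgentProgressEvent`, `SessionChannel`, and the event type names are hypothetical and exist only for this illustration.

```typescript
// Hypothetical structured progress events (illustrative only).
type AgentProgressEvent =
  | { type: "reasoning"; agentId: string; summary: string }
  | { type: "tool_call"; agentId: string; tool: string; status: "started" | "finished" }
  | { type: "partial_result"; agentId: string; data: string }
  | { type: "response"; agentId: string; text: string };

type ProgressListener = (event: AgentProgressEvent) => void;

class SessionChannel {
  private listeners: { types: AgentProgressEvent["type"][]; fn: ProgressListener }[] = [];

  // Each subscriber names the event types it cares about.
  subscribe(types: AgentProgressEvent["type"][], fn: ProgressListener): void {
    this.listeners.push({ types, fn });
  }

  // Specialist agents publish directly; nothing is relayed through an orchestrator.
  publish(event: AgentProgressEvent): void {
    for (const listener of this.listeners) {
      if (listener.types.indexOf(event.type) !== -1) listener.fn(event);
    }
  }
}
```

In this arrangement a UI might subscribe to `tool_call` and `reasoning` to replace the spinner, while the orchestrator subscribes only to `partial_result`.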

Presence. A live membership set for users, agents, and human operators. Agents make real decisions based on it: pause when the user is gone, resume when they return. Crash detection is a presence event: when an agent disconnects, the event fires immediately.
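A minimal sketch of the presence idea follows, assuming an in-memory membership set. `PresenceSet`, `PresenceChange`, and `shouldAgentRun` are hypothetical names for this example; a real presence service would also have to separate a crash from a graceful leave, which this sketch does not attempt.

```typescript
// Hypothetical in-memory presence set (illustrative only).
type Role = "user" | "agent" | "operator";

interface Member {
  clientId: string;
  role: Role;
}

interface PresenceChange {
  action: "enter" | "leave";
  member: Member;
}

class PresenceSet {
  private members = new Map<string, Member>();
  private handlers: Array<(change: PresenceChange) => void> = [];

  onChange(handler: (change: PresenceChange) => void): void {
    this.handlers.push(handler);
  }

  enter(member: Member): void {
    this.members.set(member.clientId, member);
    this.emit({ action: "enter", member });
  }

  // Fires handlers synchronously, so a departure is seen as soon as it is recorded.
  leave(clientId: string): void {
    const member = this.members.get(clientId);
    if (!member) return;
    this.members.delete(clientId);
    this.emit({ action: "leave", member });
  }

  count(role: Role): number {
    let n = 0;
    this.members.forEach((m) => {
      if (m.role === role) n++;
    });
    return n;
  }

  private emit(change: PresenceChange): void {
    for (const handler of this.handlers) handler(change);
  }
}

// The agent pauses expensive work while no user is present in the session.
function shouldAgentRun(presence: PresenceSet): boolean {
  return presence.count("user") > 0;
}
```

A supervisor could register an `onChange` handler that reacts to a `leave` from an `agent` member and starts recovery immediately, instead of waiting on a stream timeout.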

Session-level diagnostics. Channel history serves as both the live diagnostic feed and the persistent audit record: structured, timestamped, and identity-attributed. This covers the delivery layer between agent and user, separate from model-level observability, and both surfaces matter in production.
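To make the diagnostic use concrete, here is a small sketch that scans channel history for delivery stalls. It assumes each history entry carries a millisecond timestamp, a client identity, and an event name; `DeliveryRecord`, `Stall`, `findStalls`, and the threshold are hypothetical choices for this illustration.

```typescript
// Hypothetical shape of one channel history entry (illustrative only).
interface DeliveryRecord {
  timestamp: number; // milliseconds since some epoch
  clientId: string;  // who published the event
  event: string;     // e.g. "agent.token", "user.cancel"
}

interface Stall {
  after: DeliveryRecord; // last record seen before the gap
  gapMs: number;
}

// Flags any gap between consecutive records that exceeds maxGapMs.
function findStalls(history: DeliveryRecord[], maxGapMs: number): Stall[] {
  const ordered = history.slice().sort((a, b) => a.timestamp - b.timestamp);
  const stalls: Stall[] = [];
  let previous: DeliveryRecord | undefined;
  for (const record of ordered) {
    if (previous && record.timestamp - previous.timestamp > maxGapMs) {
      stalls.push({ after: previous, gapMs: record.timestamp - previous.timestamp });
    }
    previous = record;
  }
  return stalls;
}
```

The same records answer the audit question too: because each one is attributed to a `clientId`, a stall can be traced to the participant that went quiet.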

The underlying principle

Each of these problems is tractable in isolation. Solving all of them together, without a dedicated infrastructure layer, is where engineering budget quietly disappears. None of it has anything to do with the AI product itself.

The workaround that seemed to hold breaks as soon as teams need cancellation, multi-device continuity, or human handover without a context break. The result is a growing layer of glue code that keeps teams away from the features they're actually trying to ship.

The category forming around this problem, durable sessions, is the session-layer equivalent of what durable execution did for backend workflows. The infrastructure requirement is the same: a layer built for the failure modes that actually occur, not workarounds patched onto infrastructure designed for something else.

Where Ably AI Transport fits

Ably AI Transport is a drop-in durable session layer that absorbs this complexity. Developers publish to a session. The infrastructure handles resumable streaming, multi-device continuity, presence, shared state, and bi-directional communication. No changes required to your model calls or agent orchestration.