The ‘exponential rise in data’ — in trends, numbers and a changed API landscape.

The world’s collective data consumption currently stands at 33 ZB. For scale, one zettabyte (ZB) is 1,000 exabytes, or 1,000,000,000,000,000,000,000 bytes.
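Storage prefixes come in two conventions that are easy to mix up. In decimal (SI) units a zettabyte is 1,000 exabytes, or 10^21 bytes. The binary (IEC) zebibyte is 1,024 exbibytes, or 2^70 bytes, which is about 18% more. A quick Python sketch of the difference, reading the 33 ZB figure in decimal units:

```python
# Decimal (SI) versus binary (IEC) storage units.
EB = 10**18    # exabyte
ZB = 10**21    # zettabyte = 1,000 EB
EIB = 2**60    # exbibyte
ZIB = 2**70    # zebibyte = 1,024 EiB

world_data_bytes = 33 * ZB  # the 33 ZB figure, interpreted in decimal units

print(ZB // EB)                         # exabytes per zettabyte
print(ZIB // EIB)                       # exbibytes per zebibyte
print(f"{ZIB / ZB:.3f}")                # how much larger a ZiB is than a ZB
print(f"{world_data_bytes:.2e} bytes")  # 33 ZB expressed in bytes
```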

According to IDC’s whitepaper Data Age 2025, the amount of data consumed will rise more than five-fold to 175 ZB by 2025.
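Growing from 33 ZB to 175 ZB is roughly a 5.3x increase. Assuming the 33 ZB baseline refers to 2018, that corresponds to a compound annual growth rate of roughly 27%. A minimal sketch of the calculation (the baseline year is an assumption of this example):

```python
def implied_cagr(start: float, end: float, years: float) -> float:
    """Compound annual growth rate that turns `start` into `end` over `years` years."""
    return (end / start) ** (1 / years) - 1

START_ZB, END_ZB = 33, 175
BASE_YEAR, TARGET_YEAR = 2018, 2025  # 2018 baseline is assumed for this sketch

multiple = END_ZB / START_ZB
rate = implied_cagr(START_ZB, END_ZB, TARGET_YEAR - BASE_YEAR)
print(f"{multiple:.1f}x overall, about {rate:.0%} per year")
```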

What do these numbers mean in practice?

- People’s daily digital interactions will increase from today’s average of 750 to an average of 5,000 by 2025, according to IDC.

- ‘Exponential’ describes the pace at which data is accumulating: whereas data previously doubled roughly every year, IBM predicts that by 2025 the sum of stored ‘knowledge’ will double every 12 hours.

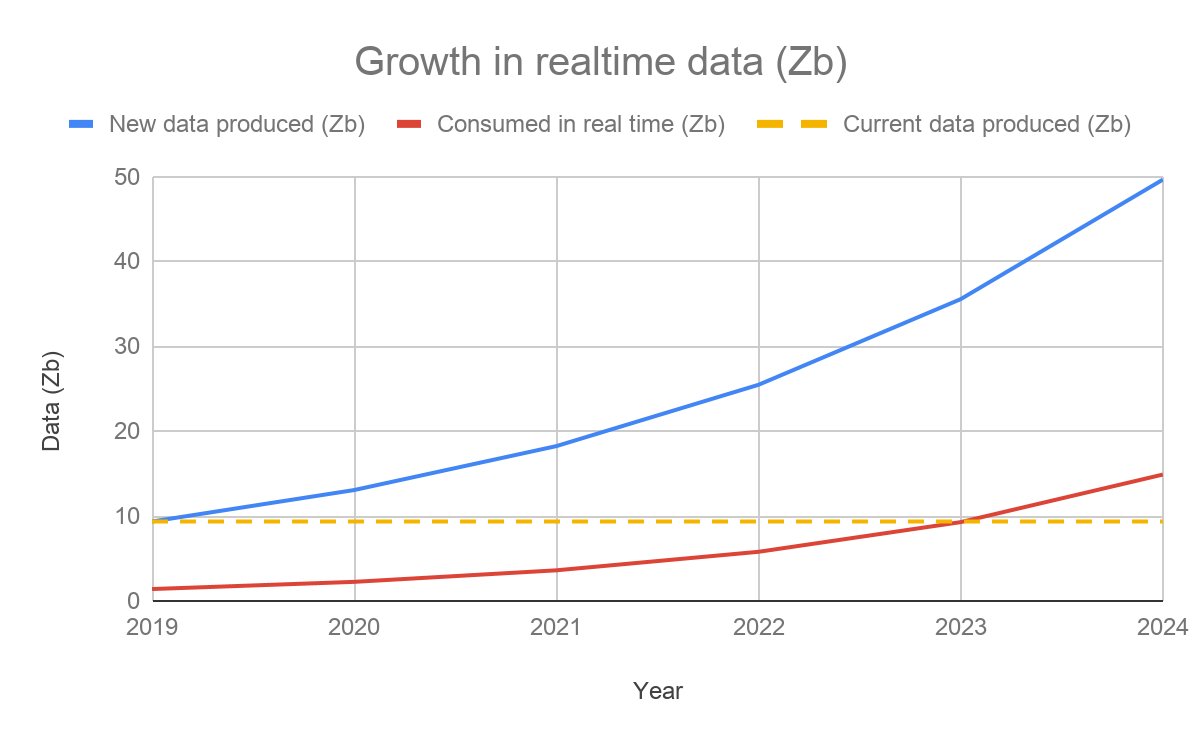

- The share of realtime data is also rising fast: by 2025, almost 30% of all data consumed will be realtime (see graph above). That is more than 1.5 times the total amount of data consumed in the world today, and it will be obsolete unless distributed and consumed live.
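The ‘1.5x’ comparison follows from IDC’s projections: almost 30% of 175 ZB is just over 50 ZB, roughly one and a half times the 33 ZB consumed today. Sketched in Python, using 30% as an upper-bound approximation of ‘almost 30%’:

```python
TOTAL_2025_ZB = 175    # IDC projection for 2025
REALTIME_SHARE = 0.30  # "almost 30%" realtime; 0.30 is an upper-bound approximation
TODAY_ZB = 33          # current total quoted earlier

realtime_2025_zb = TOTAL_2025_ZB * REALTIME_SHARE
ratio_to_today = realtime_2025_zb / TODAY_ZB
print(f"Realtime data in 2025: ~{realtime_2025_zb:.1f} ZB, {ratio_to_today:.2f}x today's total")
```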

The changed nature of data

The IDC report highlights important shifts in the way data is produced, transferred and used. Until now, the data economy’s main source has been consumer-creators of data (i.e. the value-creators behind Instagram, WhatsApp and Facebook), but the coming years will see a shift towards business-creators. These businesses will still want to share information with individual consumers, but increasingly the target recipients of their data will be other businesses and/or sets of devices supporting business processes. By 2025, 60% of the world’s data will be produced by businesses.

The rise of realtime data and of business-creators are two manifestations of much-talked-about ‘megatrends’. Consumer appetite for realtime apps and services, such as tracking Ubers, public transport and on-demand food deliveries, will fuel some of the realtime data economy. However, most of this data (up to 95%, according to IBM) will be produced by a proliferation of IoT sensors controlling a variety of newly automated industrial, business and P2P processes. According to a recent Lightbend study, use of realtime stream processing systems for machine learning and AI applications jumped by 500% over the past two years, and will continue to rise sharply.

A story of 5 acronyms — megatrends fuelling the data shift

IoT

The proliferation of IoT-connected devices (a projected 75bn coming online by 2020) and investment in their development ($60trn over the next 15 years) is such that the Internet of Things might cease to be a ‘thing’ once the majority of B2B and industrial processes are powered by data-emitting sensors, just as ‘tech companies’ ceased to exist as a distinct concept when all organizations adopted tech.

Because the conditions reported by one IoT device determine how another reacts, and ultimately how a whole set of devices functions (in autonomous vehicles (AVs), for example, or in other automated processes), these systems depend on information about constantly changing environmental conditions. Data needs to be sent, received and processed in real time, or it loses its value. Realtime APIs will create a growing branch of the API economy, making it easy for businesses to integrate, monetize and build services around data streams, reacting to events as they occur and using the data before it becomes obsolete.
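The pattern described above, where one device’s reading triggers another device’s reaction and late data is worthless, is essentially publish/subscribe with an expiry on messages. The sketch below is a toy, in-process illustration under stated assumptions: the `Broker` and `Event` classes, the channel name and the 0.5-second freshness limit are all hypothetical, and it does not represent Ably’s or any other provider’s API.

```python
import time
from collections import defaultdict
from dataclasses import dataclass
from typing import Callable, DefaultDict, List, Optional

@dataclass
class Event:
    channel: str
    value: float
    timestamp: float  # seconds since the epoch when the reading was taken

class Broker:
    """Hypothetical in-process pub/sub broker that drops readings older than max_age_s."""

    def __init__(self, max_age_s: float) -> None:
        self.max_age_s = max_age_s
        self.subscribers: DefaultDict[str, List[Callable[[Event], None]]] = defaultdict(list)
        self.dropped = 0

    def subscribe(self, channel: str, handler: Callable[[Event], None]) -> None:
        self.subscribers[channel].append(handler)

    def publish(self, event: Event, now: Optional[float] = None) -> None:
        now = time.time() if now is None else now
        if now - event.timestamp > self.max_age_s:
            self.dropped += 1  # too old to act on: discard rather than deliver
            return
        for handler in self.subscribers[event.channel]:
            handler(event)

broker = Broker(max_age_s=0.5)
actions = []

def brake_controller(event: Event) -> None:
    # Hypothetical reaction: a second device responds to the first device's reading.
    if event.value < 10.0:
        actions.append(("brake", event.value))

broker.subscribe("vehicle-ahead/distance-m", brake_controller)
now = time.time()
broker.publish(Event("vehicle-ahead/distance-m", 8.0, now), now=now)        # fresh: delivered
broker.publish(Event("vehicle-ahead/distance-m", 5.0, now - 2.0), now=now)  # stale: dropped
print(actions, broker.dropped)
```

Dropping stale events at the broker, rather than in each subscriber, is one design choice among several; a real system might instead deliver everything and let consumers decide.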

AI

Artificial intelligence and machine learning essentially describe different, creative ways to use data, which again is transferred through APIs. Broken down, processes in both AI and ML rely on collecting, moving, storing, exploring, transforming, aggregating, labelling, A/B testing and optimizing data. AI can be pre-programmed to enable machines to carry out specific tasks, and has already replaced junior workers on repetitive data identification tasks in law and healthcare. To illustrate: pigeons can identify cancerous mammograms as well as humans, but AI does a significantly better job, with 99% accuracy. AI is already using realtime technology to identify health issues and solutions, notably in the fields of diabetes and neurology. According to Juniper Research, cross-sector annual global spending on AI will reach $7.3bn by 2022.
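Two of the steps listed above, aggregating and labelling, can be illustrated on a stream of sensor readings. This is a minimal sketch assuming fixed one-second (tumbling) windows and a hypothetical threshold rule standing in for a trained model; production pipelines typically run this kind of logic in a stream-processing framework rather than over an in-memory list.

```python
from statistics import mean
from typing import Dict, Iterable, List, Tuple

Reading = Tuple[float, float]  # (timestamp in seconds, sensor value)

def tumbling_windows(readings: Iterable[Reading], width_s: float) -> Dict[int, List[float]]:
    """Group readings into fixed, non-overlapping windows of width_s seconds."""
    windows: Dict[int, List[float]] = {}
    for ts, value in readings:
        windows.setdefault(int(ts // width_s), []).append(value)
    return windows

def aggregate_and_label(
    readings: Iterable[Reading], width_s: float, threshold: float
) -> List[Tuple[float, float, str]]:
    """Average each window, then label it with a simple (hypothetical) threshold rule."""
    labelled = []
    for index, values in sorted(tumbling_windows(readings, width_s).items()):
        avg = mean(values)
        label = "alert" if avg > threshold else "normal"
        labelled.append((index * width_s, avg, label))
    return labelled

stream = [(0.1, 20.0), (0.7, 22.0), (1.2, 30.0), (1.9, 34.0), (2.5, 21.0)]
result = aggregate_and_label(stream, width_s=1.0, threshold=25.0)
print(result)
```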

ML

ML is a subset of AI that goes one step further than pre-programmed rules: it gives machines access to data and lets them learn independently, based on patterns and repetitions. For example, in finance, ML can make realtime decisions on automated, short-term trading strategies involving a large number of securities. The system applies a trading algorithm to sets of securities based on quantities such as historical correlations and general economic variables, and uses the results of these decisions to automatically improve future ones. As data in all industries becomes available in real time, both AI and ML processes are likely to rely on smart, industry-wide implementation of realtime streaming APIs to source, use and transfer this data, keeping users of this technology ahead of the game.
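Learning from each new observation as it arrives is known as online learning. The sketch below is not a trading strategy. It is a minimal illustration of the idea, fitting a one-variable linear model with stochastic gradient descent to a synthetic stream whose ‘true’ relationship (y = 3x + 0.5 plus noise) is invented for the example.

```python
import random

class OnlineLinearModel:
    """y ~ w * x + b, updated one observation at a time with stochastic gradient descent."""

    def __init__(self, learning_rate: float = 0.01) -> None:
        self.w = 0.0
        self.b = 0.0
        self.lr = learning_rate

    def predict(self, x: float) -> float:
        return self.w * x + self.b

    def update(self, x: float, y: float) -> float:
        """Learn from one new (x, y) pair; returns the prediction error before the update."""
        error = self.predict(x) - y
        # Gradient of 0.5 * error**2 with respect to w and b.
        self.w -= self.lr * error * x
        self.b -= self.lr * error
        return error

random.seed(0)
model = OnlineLinearModel(learning_rate=0.05)
for _ in range(2000):
    x = random.uniform(-1, 1)
    y = 3.0 * x + 0.5 + random.gauss(0, 0.05)  # hypothetical relationship plus noise
    model.update(x, y)
print(round(model.w, 2), round(model.b, 2))
```

Each update uses only the latest observation, so the model keeps adapting as the stream continues instead of being retrained from scratch.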

5G

5G has been subject to much hype, but now, according to ZDNet’s coverage of MWC 2019, the rhetoric has matured. 5G makes it possible to meet consumer expectations around fast communications, downloads and realtime interactions, with connections up to 100 times faster than 4G. But the true significance of increased bandwidth is the improved connectivity it enables between devices. As ZDNet commentators write, rather than being discussed as ‘sexy’ standalone technology, “this year, 5G was discussed in combination with AI and IoT”. In the 5G, AI and IoT ‘trinity’, 5G serves as the infrastructure ‘enabler’ for the other trends, supporting the data-heavy demands of the realtime, AI, ML and IoT-driven internet. As more products, services and processes rely on data transfer driven by these acronyms, we can expect a corresponding proliferation of realtime APIs, all of which require realtime API streaming and management infrastructure. Another acronym, AVs, illustrates this.

AVs (and others)

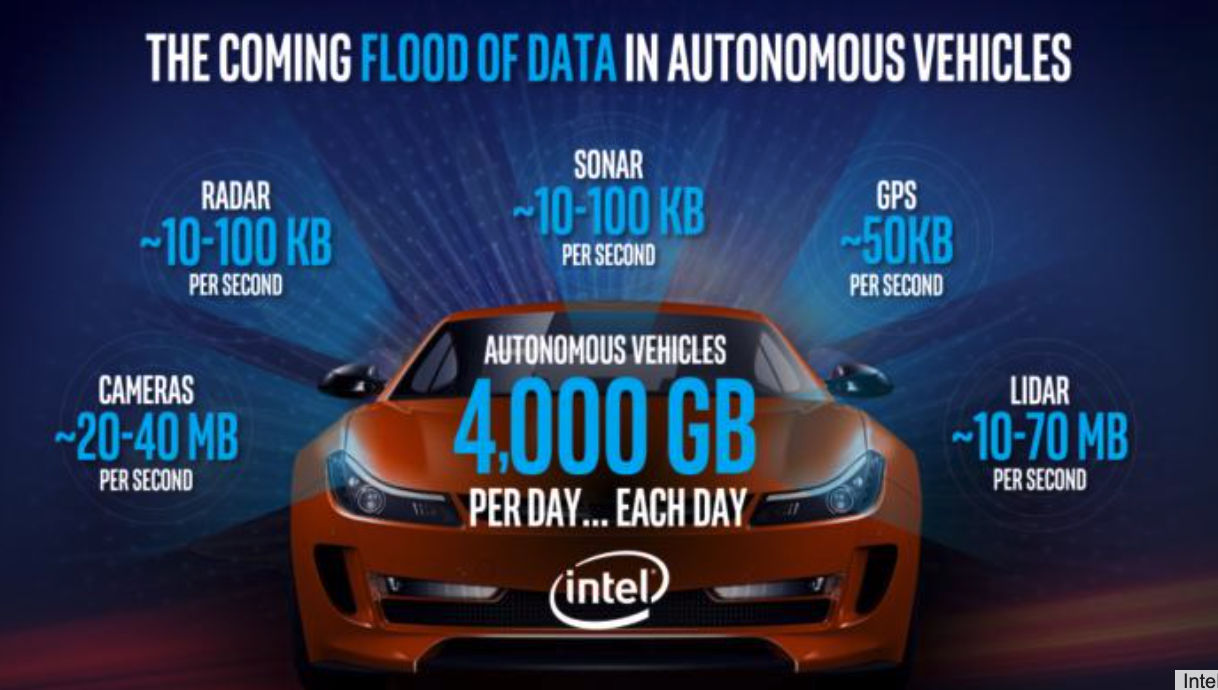

The perfect storm of maturing IoT, AI, ML and 5G makes AVs one of several phenomena that will become a normal part of everyday life. IoT sensors transmit and receive data, in real time, about the live environment the vehicle is navigating. Supported by 5G networks, AI algorithms control, again in real time, how the vehicle responds, while ML processes the data and constantly improves how the vehicle reacts.

The implications for realtime data transfer infrastructure are significant. Each vehicle relies on data-heavy transfer processes (see diagram above), resulting in 4,000 GB of realtime data transferred daily.
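To put the 4,000 GB per day figure in network terms: spread evenly over 24 hours, it is a sustained average of about 370 Mbit/s per vehicle, before allowing for any peaks. A minimal sketch, assuming decimal units (1 GB = 8,000 megabits):

```python
SECONDS_PER_DAY = 24 * 60 * 60  # 86,400

def sustained_rate_mbit_s(gb_per_day: float) -> float:
    """Average throughput in megabits per second, using decimal units (1 GB = 8,000 Mbit)."""
    return gb_per_day * 8_000 / SECONDS_PER_DAY

print(f"{sustained_rate_mbit_s(4_000):.0f} Mbit/s per vehicle")
```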

Fast forward ten years, when (according to some sources) the world’s 1.4 billion cars will have been replaced by AVs. In a city like London, if its 2.6m vehicles become driverless, realtime infrastructure requirements would stand at 10,400,000,000 GB of data per day, or about 120,370 GB per second: a minimum, mission-critical requirement for keeping traffic moving.
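The London figures follow directly from the per-vehicle estimate. A quick check of the arithmetic, which, like the figures themselves, assumes traffic is spread evenly across the day (so it gives an average, not a peak):

```python
GB_PER_VEHICLE_PER_DAY = 4_000
LONDON_VEHICLES = 2_600_000
SECONDS_PER_DAY = 86_400

daily_gb = GB_PER_VEHICLE_PER_DAY * LONDON_VEHICLES
per_second_gb = daily_gb / SECONDS_PER_DAY
print(f"{daily_gb:,} GB/day, about {per_second_gb:,.0f} GB/s")
```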

Are businesses ready?

AVs represent just one part of one industry that we can expect to see automated. Manufacturing, transport, supply chain, medicine and others all increasingly rely on realtime updates from data-emitting sensors. As the megatrends we have heard so much about become part of everyday life, they will support a growing realtime API economy. Shared between multiple businesses, individuals and organizations, realtime APIs will help improve processes and accelerate realtime-based innovation more widely.

Ably is one of several Data Stream Network providers entering the market to solve a potential technological bottleneck in this process (another notable example is PubNub, which recently received a $23m investment from IBM to develop its IoT offering). According to the same Lightbend study, some of the most often cited challenges to implementing fast data are developer skills (cited by 31% of survey takers); complexity of tools, techniques and technologies (30%); choosing the right tools and techniques (26%); difficulty making changes (26%); and integration with legacy infrastructure (24%).

What do these numbers mean in practice? Whether they want to reap the benefits or merely tap into the realtime share of the data economy (almost 30% of all data consumed by 2025), organizations have to spend significant engineering resources building pair-wise integrations between numerous realtime APIs. These streaming APIs have to operate at scale, supporting sudden, unpredictable bursts in usage from various globally distributed sources.
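The cost of pair-wise integration grows quickly: if n organizations each integrate directly with every other, they need n(n-1)/2 integrations, while connecting each one once to a shared hub or broker needs only n. The sketch below counts undirected links only and is a simplified illustration, not a model of any particular product’s architecture.

```python
def point_to_point_integrations(n: int) -> int:
    """Links needed if each of n parties integrates directly with every other: n(n-1)/2."""
    return n * (n - 1) // 2

def hub_integrations(n: int) -> int:
    """Links needed if each party integrates once with a shared hub or broker."""
    return n

for n in (5, 20, 100):
    print(n, point_to_point_integrations(n), hub_integrations(n))
```

The point-to-point count grows quadratically with the number of parties, while the hub count grows linearly, which is one reason shared streaming infrastructure is attractive as the number of realtime APIs rises.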

The ‘integration with legacy infrastructure’ challenge is also important. Protocols and platforms for realtime data sharing are currently fragmented, so businesses have to rely on custom-built streaming solutions specific to each API or business producing the data. As a result, realtime data streaming has so far generally been the preserve of organizations with large engineering teams. Even then, API management solutions that would enable easy integration and proliferation of streaming APIs are, as yet, virtually non-existent.

Applying AWS bandwidth pricing to IDC’s realtime data predictions puts the new market for raw realtime data at $750bn. The opportunity for capitalizing on value-added realtime data streams is significantly larger.

Ably is a global cloud network for streaming data and managing the full lifecycle of realtime APIs

To find out about the current state of data-transfer infrastructure, the development of the new realtime data economy, and how your organization can turn data streams into revenue streams, talk to Ably’s tech team.

More information about the past, present and future of the API economy is available in Data is no longer at REST, an article based on a talk by Ably’s Founder and CEO.

Details of how engineers have overcome numerous obstacles related to realtime data sharing at scale are available to read on the Ably Engineering blog.