HTTP streaming – the default transport underneath every major agent framework – was never designed for sessions that survive a tab close or hand off cleanly between participants. Two failures surface consistently in production CX products because of this. Both generate support tickets about conversation state and prompt quality. Both trace to the transport layer.

The scenario that illustrates them: a customer contacts support about an order that's partially shipped and partially stuck. The AI agent works through it – checks the order, identifies the split, and starts a resolution path. Five minutes in, the customer closes the tab to find their order confirmation email. They reopen support. The session is gone. They re-explain from scratch.

The agent picks the thread back up. A few turns later, it hits an authorization limit and escalates to a human. The human joins and asks: "Can you tell me what's happening with your order?" The AI had the full picture. None of it transferred.

Two failures, one session. The agent frameworks built on top of HTTP streaming inherit its constraints, and no amount of prompt tuning or context retrieval logic reaches the root cause of either.

This article explains what each failure actually is, why the common fixes don't work, and where the architectural fix lives.

Why customer support is a harder transport problem

Most AI applications are point-to-point: one user, one AI agent, one connection. Session state lives in that connection. This works because nothing changes mid-session – the same client stays connected for the duration of the exchange.

Customer support breaks this assumption in two specific ways. Customers interact across devices and over extended windows, not in single continuous sessions from a fixed endpoint. And the conversation involves multiple participants – the customer, an AI agent, a human agent, and often a supervisor watching live – who join and leave at different points.

The session belongs to the conversation, not to any individual connection or participant.

Current AI infrastructure doesn't reflect this. Every major agent framework – Vercel AI SDK, LangGraph, CrewAI, Pydantic AI – uses HTTP streaming as its default transport. HTTP streaming is point-to-point by design: one client, one connection, active for the duration of the exchange. That assumption holds for a simple chatbot. It breaks the moment the session needs to survive participant changes.

The two failures that surface most consistently in production CX products both trace back to this constraint.

Failure 1: the session doesn't survive a tab close

HTTP streaming and SSE maintain state in the connection. When the connection closes – because the user closed a tab, switched networks, or closed their laptop – the state is gone.

Most teams discover this and reach for a workaround: for example, a Redis buffer between the AI backend and the client. The buffer stores recent tokens so the client can reload without losing the last response. It handles full page refreshes.

It doesn't handle tab switches, where the new tab has no reference to the previous session ID. It doesn't handle device switches, where a mobile client has no mechanism to identify itself as the same participant. And it doesn't handle background task delivery, where an agent completes work while the user is away and needs to reach whoever reconnects.

The Redis workaround covers one failure mode and leaves the others open.
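A minimal sketch of that gap, with a plain dictionary standing in for Redis. The names here (`TokenBuffer`, `sess-123`) are hypothetical, and keying the buffer by a connection-scoped session ID is an assumption about how such buffers are commonly built, not a description of any particular product.

```python
from typing import Optional


class TokenBuffer:
    """Stand-in for a Redis buffer keyed by a connection-scoped session ID."""

    def __init__(self) -> None:
        self._tokens: dict[str, list[str]] = {}

    def append(self, session_id: str, token: str) -> None:
        self._tokens.setdefault(session_id, []).append(token)

    def recover(self, session_id: Optional[str]) -> Optional[str]:
        # Recovery only works if the client can still present the session ID.
        if session_id is None or session_id not in self._tokens:
            return None
        return "".join(self._tokens[session_id])


buffer = TokenBuffer()
for token in ["Your ", "order ", "was ", "split."]:
    buffer.append("sess-123", token)

# Page refresh: the tab kept its session ID, so the response is recovered.
refreshed = buffer.recover("sess-123")

# New tab or new device: the client may know who the user is, but nothing
# maps that identity back to "sess-123", so it has no ID to present.
new_device = buffer.recover(None)
```

The data is still in the buffer in the second case. What is missing is a way for a different connection to address it.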

The misdiagnosis that keeps teams on this path is that the failure presents as a state persistence problem. Customers report losing their conversation. Product managers file tickets about session continuity. Engineers look at where state is stored and add more durable storage.

The constraint is elsewhere: in the transport. The session layer needs to be decoupled from the connection, so that any authenticated participant can join and replay from a known offset, regardless of device or reconnect path.
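One way to picture "join and replay from offset" is an append-only log owned by the conversation rather than by a connection. The sketch below is illustrative only: `DurableSession`, `Event`, and the in-memory list are assumptions made for the example, and a real system would add persistence, authentication, and live delivery.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Event:
    offset: int  # position in the session log
    sender: str  # who published the event
    body: str


@dataclass
class DurableSession:
    """Conversation-scoped log; connections come and go around it."""

    session_id: str
    authorized: set[str]
    log: list[Event] = field(default_factory=list)

    def publish(self, sender: str, body: str) -> int:
        self._check(sender)
        event = Event(offset=len(self.log), sender=sender, body=body)
        self.log.append(event)
        return event.offset

    def replay(self, participant: str, from_offset: int = 0) -> list[Event]:
        # Any authorized participant can read from a known offset,
        # whichever device or connection they arrive on.
        self._check(participant)
        return self.log[from_offset:]

    def _check(self, participant: str) -> None:
        if participant not in self.authorized:
            raise PermissionError(f"{participant} may not access {self.session_id}")


session = DurableSession("order-4417", authorized={"customer", "ai-agent"})
session.publish("customer", "Half my order is stuck.")
session.publish("ai-agent", "I see a split shipment.")
next_offset = 2  # the desktop tab saw offsets 0 and 1 before it closed

# The agent keeps working while the customer is away.
session.publish("ai-agent", "Refund path started.")

# The customer reconnects from a phone and asks for everything after offset 1.
missed = session.replay("customer", from_offset=next_offset)
```

The reconnecting client only needs its identity and the last offset it saw. It does not need the original connection or a client-side session ID.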

Ada rebuilt their entire transport stack over 18 months because incremental fixes on this architecture kept accumulating. ActiveCampaign built custom state management that carried tech debt from the first week. Backline built approximately 30 microservices for message delivery before reaching the same conclusion: the workaround is not the architecture.

Of the 26 AI platforms evaluated in our recent research, 23 have no multi-device session continuity, ChatGPT among them. This is not a feature gap waiting to be filled in the next release. It is a structural consequence of building on HTTP streaming, which has no native concept of a session that persists independently of any single connection.

Failure 2: human escalation is a transport event

When an AI agent in a CX product escalates to a human, a new participant joins a session already in progress. What happens to session state at that moment determines whether the handoff works.

The common implementation looks like this. The AI agent closes its connection and routes the session ID to a queue. A human agent opens a new connection and pulls the transcript from a database. The human reads back, understands the context, and responds. The customer waits.

This works when it works. It fails in three specific ways that matter in production.

First, the transcript pull is asynchronous – the human may start responding before the full context has loaded, particularly under queue pressure. Second, the transcript is a historical record, not a live session. The human has read access to what was said but no visibility into what the AI did after the transcript was exported. Third, the handback is usually a separate implementation entirely – often manual, with no infrastructure support.
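The second failure, the gap between a transcript export and the live session, can be shown in a few lines. This is a hedged sketch: the list-based log and the `publish` helper are hypothetical stand-ins for whatever store and queue a real system uses.

```python
import copy

session_log: list[dict] = []


def publish(sender: str, body: str) -> None:
    """Append an event to the live session log."""
    session_log.append({"offset": len(session_log), "sender": sender, "body": body})


publish("customer", "Half my order is stuck.")
publish("ai-agent", "Escalating: refund exceeds my authorization limit.")

# Escalation by export: the human agent receives a point-in-time copy.
transcript = copy.deepcopy(session_log)

# An in-flight action completes after the export was taken.
publish("ai-agent", "Replacement for the stuck items has been queued.")

exported_view = [event["body"] for event in transcript]
live_view = [event["body"] for event in session_log]

# Everything the human cannot see by reading the exported transcript.
invisible_to_human = live_view[len(exported_view):]
```

With a transcript export, the human's view stops at the moment of the copy. Reading from the live log instead would include the event that landed afterwards.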

The most common symptom: the human agent opens the session and asks, "Can you describe the issue?" The customer has already described it in detail to the AI. From the customer's side, the experience is that the AI wasted their time and the human ignored everything they said. Teams diagnose this as a workflow failure – the agent should have read the transcript before responding – and update their playbook.

The root cause stays unaddressed.

The framing that clarifies both failures: every transition in a support conversation – AI to human, human back to AI, supervisor entering without taking over – is a transport event. A participant joins or leaves the session. If the session is tied to a connection rather than to the conversation itself, each transition requires a state transfer: export, import, and re-establish. Each transfer is a point where context drops, arrives late, or never makes it.

Warm and cold transfer patterns both require the session to be addressable by multiple participants – simultaneously for warm transfer, sequentially for cold. Warm transfer means the AI stays briefly while the human joins, introducing context before dropping off. Cold transfer means the AI disconnects and the human picks up with full history immediately available. Both require a shared, persistent session that any authorized participant can join and replay from a known offset.

Point-to-point HTTP streaming provides none of that. Each participant requires its own connection, and there is no shared session both can address. Warm transfer becomes a live context-passing hack: the AI summarizes what it knows and sends that to the human before closing. Cold transfer becomes a transcript export: state is serialized, queued, and pulled by the human agent on connect. Each pattern introduces the same failure modes – asynchronous load, gaps for in-flight events, no path to hand control back – because the underlying model hasn't changed. The plumbing that makes these patterns work at all is where most of the engineering time in CX products goes.
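By contrast, if a shared session exists, both transfer patterns reduce to ordinary join and leave events. The sketch below assumes a hypothetical in-memory `SharedSession`; the class and function names are invented for illustration and do not correspond to any framework's API.

```python
from dataclasses import dataclass, field


@dataclass
class SharedSession:
    """One conversation-scoped session that several participants can address."""

    participants: set[str] = field(default_factory=set)
    log: list[tuple[str, str]] = field(default_factory=list)  # (sender, body)

    def join(self, who: str) -> list[tuple[str, str]]:
        self.participants.add(who)
        self.log.append((who, "<joined>"))
        return list(self.log)  # the joiner receives the history so far

    def leave(self, who: str) -> None:
        self.participants.discard(who)
        self.log.append((who, "<left>"))

    def publish(self, who: str, body: str) -> None:
        if who not in self.participants:
            raise RuntimeError(f"{who} is not in the session")
        self.log.append((who, body))


def warm_transfer(session: SharedSession, ai: str, human: str) -> list[tuple[str, str]]:
    history = session.join(human)  # human joins while the AI is still present
    session.publish(ai, "Handing over: split shipment, refund needs approval.")
    session.leave(ai)
    return history


def cold_transfer(session: SharedSession, ai: str, human: str) -> list[tuple[str, str]]:
    session.leave(ai)  # the AI disconnects first
    return session.join(human)  # the human picks up with the full history


warm = SharedSession()
warm.join("ai")
warm.publish("ai", "I see a split shipment.")
warm_history = warm_transfer(warm, ai="ai", human="human")

cold = SharedSession()
cold.join("ai")
cold.publish("ai", "I see a split shipment.")
cold_history = cold_transfer(cold, ai="ai", human="human")
```

In both cases the human reads the same log the AI wrote to, so there is no separate export step where context can arrive late or go missing.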

Why both failures get misdiagnosed

The pattern is consistent. Engineers investigating a session that reset on a tab close look at what state was lost and where it was stored. The answer is usually "in the connection," and the fix is usually "store it somewhere more durable." Databases get added. Caches get extended. The session keeps failing to survive, each time for a new reason, because the transport assumption has not changed.

Escalation failures produce a different response: more infrastructure. Teams build custom state-passing logic between agents, coordination layers, transcript export pipelines. The session still breaks at each transition because the underlying constraint – each participant requires its own connection, with no shared session both can address – hasn't changed. One AI customer support team I spoke to disabled user input entirely during agent responses because there was no reliable way to handle interruption on a one-way stream. That's a product-level workaround for a transport-level problem.

Both failures share the same origin. The session infrastructure handles single-participant, single-connection interactions. Customer support adds participants, transitions, and multi-device access patterns this model wasn't built for. More storage, more coordination logic, and more custom plumbing keep the workaround running longer. But the transport assumption underneath hasn't changed, so the failures keep finding new ways to surface.

Where the fix actually lives

The architectural shift that addresses both failures is the same: decouple session state from the connection and make it a property of the conversation.

A session should be addressable by any authenticated participant – the customer on any device, the AI agent, a human agent taking over, a supervisor observing. Any participant should be able to join, replay from a known offset, and see current conversation state without a bespoke state transfer at each transition. When a human agent joins, they get the full history – not a transcript export, but live access to the same session channel the AI has been publishing to.

The concept has a name now: durable sessions. The category is forming across the ecosystem independently. Vercel built a pluggable ChatTransport interface specifically so external transports can own session persistence. TanStack AI shipped a ConnectionAdapter for the same reason. These are explicit acknowledgments that transport architecture sits outside the scope of frameworks focused on orchestration and UI rendering. The session layer is a separate problem requiring a separate solution.

Architectural implications for CX engineering teams

Both failures trace to a transport assumption that most teams haven't explicitly made – they inherited it from the frameworks they're building on. The question worth asking is not "how do we fix context loss?" but "what does our session model actually own, and what is it missing?"

For teams still in design: the decisions that matter most here get harder to reverse once you've shipped. The Redis buffer pattern will handle your demo and early traffic. What it won't survive is a customer on mobile picking up a conversation they started on desktop, or a human agent joining a session mid-flight and needing live state rather than a transcript. If those scenarios are in your product roadmap – and in CX they almost always are – the transport architecture is worth designing around a session abstraction now rather than bolting one on later.

For teams diagnosing production failures: the two failures in this article show up as different-looking problems. Session loss looks like a persistence bug. Escalation failures look like a workflow gap. Both get routed to product and engineering as separate tickets with separate owners. The diagnostic question that cuts through both: does your session survive a participant transition, or does each transition require a state export and reimport? If the latter, you're not looking at two unrelated bugs. You're looking at the same transport constraint expressing itself in two places.

For teams in regulated industries or enterprise sales: the conversation changes. Healthcare, financial services, and enterprise support don't treat session continuity as a UX concern – they treat it as an audit requirement. Every participant transition needs a timestamped, identity-attributed record. Delivery ordering matters when multiple backend services write to the same conversation. Presence-aware cost controls matter when enterprise contracts include SLA clauses. A transport layer assembled from Redis buffers and transcript exports can't provide these. They require infrastructure with session identity, actor differentiation, and delivery guarantees as foundational properties, not add-ons.
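As a rough illustration of what a timestamped, identity-attributed record of participant transitions might look like, here is a minimal sketch. The field names and the `AuditedSession` class are assumptions made for the example. The session-assigned sequence number stands in for a delivery-ordering guarantee, which a real system would need to enforce across multiple writers.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from itertools import count


@dataclass(frozen=True)
class AuditRecord:
    seq: int         # session-assigned order, independent of wall clocks
    at: datetime     # UTC timestamp of when the record was written
    actor_id: str    # which identity acted
    actor_type: str  # e.g. "customer", "ai", "human", "supervisor"
    action: str      # e.g. "join", "leave", "message"


class AuditedSession:
    """Records every participant transition in a single ordered log."""

    def __init__(self) -> None:
        self._seq = count()
        self.records: list[AuditRecord] = []

    def record(self, actor_id: str, actor_type: str, action: str) -> AuditRecord:
        rec = AuditRecord(
            seq=next(self._seq),
            at=datetime.now(timezone.utc),
            actor_id=actor_id,
            actor_type=actor_type,
            action=action,
        )
        self.records.append(rec)
        return rec


audit = AuditedSession()
audit.record("bot-7", "ai", "join")
audit.record("agent-42", "human", "join")
audit.record("bot-7", "ai", "leave")

# Reconstruct who was involved in the handoff, in order.
handoff = [(r.seq, r.actor_type, r.action) for r in audit.records]
```

Because ordering comes from the session rather than from each writer's clock, two backend services writing to the same conversation still produce one unambiguous sequence.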

The session layer as the unit of ownership

The architectural principle that unifies both fixes: treat the session, not the connection, as the durable unit. A durable session persists independently of any single connection, device, or participant. Tab close, device switch, escalation, handback – each is a transport event on the same session, not a state transfer between separate systems. That framing resolves both failures at the root rather than at the symptom. It's also the shift the ecosystem is converging on: Vercel and TanStack both built explicit plug-in points for external session transports because this layer sits outside what framework-level tools are designed to own.

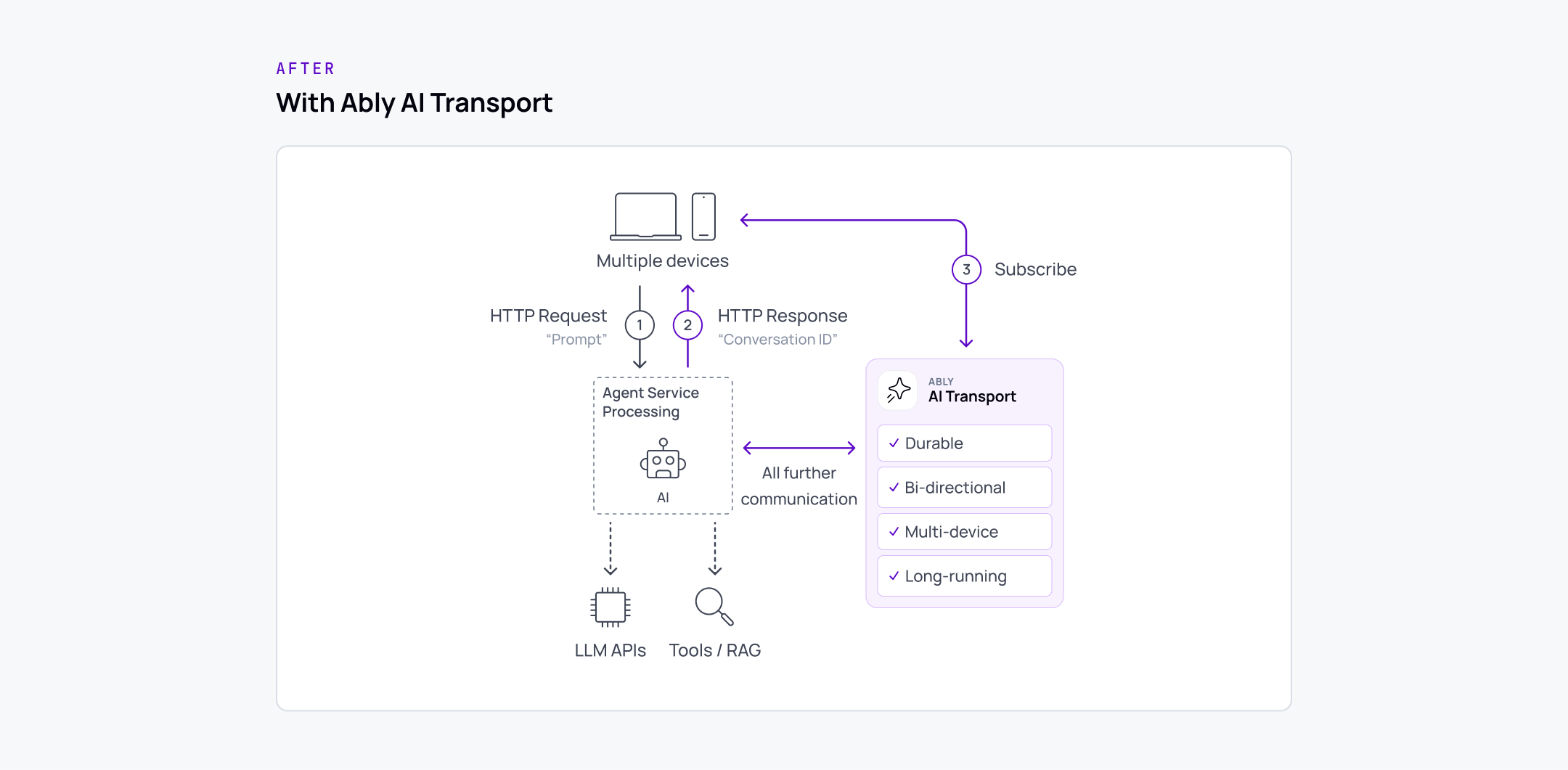

Ably AI Transport is built on this model. It provides a persistent, addressable session channel that any participant – customer, AI agent, human agent, supervisor – can join, replay from offset, and publish to. It works alongside any agent framework and any LLM provider, without requiring changes to how the agent or the UI is built. The durable session infrastructure is the piece teams no longer have to build themselves.