TL;DR Vercel AI SDK handles the application layer, but explicitly delegates transport to external providers. The default SSE transport breaks in production in predictable ways. Vercel’s own ChatTransport interface exists specifically so you can replace it.

Vercel AI SDK covers model calls, orchestration, and UI rendering. For simple chatbots, it works end to end. The trouble comes when agents run long tasks or users are on enterprise networks: streaming that works in development fails in production. You'll see the same failure modes across every production AI stack, not just Vercel's.

The failure modes are consistent and documented. Vercel has made transport pluggable. The question is what to put there.

Why SSE breaks in production

useChat defaults to SSE: a one-way HTTP connection that streams text events from server to browser. On a stable network, it works. Production is where the same problems show up every time.

Proxy buffering and “fake streaming”

Enterprise proxies treat SSE as a single HTTP response and hold it until the connection closes. All tokens arrive in one burst; users see nothing, then everything at once. That's "fake streaming". AWS ALB terminates idle connections after 60 seconds by default. Nginx buffers responses unless you explicitly disable it. You have no control over any of this on your users' networks.
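On infrastructure you do control, the usual mitigation is to ask proxies not to buffer and to keep the connection from going idle. The sketch below shows one way to do that; the function names are illustrative, and it assumes the response passes through Nginx or a proxy that honours `X-Accel-Buffering`. Proxies on the user's network may ignore all of it.

```typescript
// Headers that ask intermediaries you control not to buffer or transform the stream.
// Assumption: an Nginx-style proxy sits in front of the server and honours
// X-Accel-Buffering. Proxies on the user's side may still buffer.
function sseHeaders(): Record<string, string> {
  return {
    "Content-Type": "text/event-stream",
    "Cache-Control": "no-cache, no-transform",
    "X-Accel-Buffering": "no",
  };
}

// Serialise one SSE event. Each line of the payload gets its own "data:" field,
// and a blank line terminates the event.
function formatSseEvent(data: string, id?: number): string {
  const idLine = id === undefined ? "" : `id: ${id}\n`;
  const dataLines = data
    .split("\n")
    .map((line) => `data: ${line}`)
    .join("\n");
  return `${idLine}${dataLines}\n\n`;
}

// A line starting with ":" is a comment that clients ignore. Sending one
// periodically counts as traffic, so idle timeouts are less likely to fire.
const HEARTBEAT = ": keep-alive\n\n";
```

A heartbeat interval shorter than the load balancer's idle timeout (60 seconds by default on AWS ALB) keeps the connection from being treated as idle.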

No stream recovery on disconnect

When an SSE connection drops - tab switch, mobile backgrounding, network change - the stream is gone. Nothing resumes it by default: SSE's Last-Event-ID reconnection only helps when the server stores and replays events, and out of the box the client has no record of how far generation got. Vercel’s resumable-stream covers page reloads only; tab switches, device switches, and mobile backgrounding are all out of scope.

resumable-stream also has a documented incompatibility with abort (issue #8390).
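To make the missing piece concrete, here is a minimal sketch of what resuming requires: the server keeps an ordered log of chunks, and the client remembers the last offset it saw. `ReplayLog` and `ResumingClient` are hypothetical names, and the in-memory array stands in for storage that would have to be shared and durable in a real deployment.

```typescript
// One streamed chunk, tagged with its position in the generation.
interface StreamEvent {
  offset: number;
  text: string;
}

// Hypothetical in-memory log. A real deployment needs shared, durable storage.
class ReplayLog {
  private events: StreamEvent[] = [];

  // Record a chunk and return the event the server would send to live clients.
  append(text: string): StreamEvent {
    const event: StreamEvent = { offset: this.events.length + 1, text };
    this.events.push(event);
    return event;
  }

  // Everything the client has not seen yet, given the last offset it received.
  since(lastSeenOffset: number): StreamEvent[] {
    return this.events.filter((e) => e.offset > lastSeenOffset);
  }
}

// Client side: track the last offset so a reconnect can ask for the rest.
class ResumingClient {
  lastSeenOffset = 0;
  text = "";

  receive(event: StreamEvent): void {
    if (event.offset <= this.lastSeenOffset) return; // ignore duplicates
    this.text += event.text;
    this.lastSeenOffset = event.offset;
  }
}
```

On reconnect the client sends `lastSeenOffset`, the server answers with `log.since(lastSeenOffset)`, and live delivery continues from there.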

One connection, one device

SSE is scoped to a single HTTP request from a single browser tab. A second tab gets an independent session; a phone gets nothing. 40-60% of streaming sessions involve a tab switch. None recover automatically.

Cancellation is ambiguous

HTTP streaming is unidirectional. There is no signal path from client to server on the streaming connection. A cancel request sent as a separate HTTP call often lands on a different server instance than the one streaming. The server continues generating tokens regardless, and users get billed for work they canceled.
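The alternative is to treat cancellation as an explicit message that reaches the process actually running the generation. The sketch below assumes such a message can be routed there (for example over the session's own channel); `beginGeneration`, `handleCancel`, and `generate` are hypothetical helpers, and `generate` is a stand-in for a real model call.

```typescript
// Hypothetical registry living in the process that runs the generation.
const activeGenerations = new Map<string, AbortController>();

// Start tracking a generation and hand back the signal its loop should watch.
function beginGeneration(id: string): AbortSignal {
  const controller = new AbortController();
  activeGenerations.set(id, controller);
  return controller.signal;
}

// Handle an explicit cancel message for a generation.
// Returns false when this process is not running that generation.
function handleCancel(id: string): boolean {
  const controller = activeGenerations.get(id);
  if (!controller) return false;
  controller.abort();
  activeGenerations.delete(id);
  return true;
}

// Stand-in for a model call: emits tokens until finished or cancelled,
// and returns how many tokens were actually produced.
function generate(
  tokens: string[],
  signal: AbortSignal,
  onToken: (token: string) => void,
): number {
  let emitted = 0;
  for (const token of tokens) {
    if (signal.aborted) break; // stop producing tokens nobody will read
    onToken(token);
    emitted++;
  }
  return emitted;
}
```

The `false` return from `handleCancel` is the case described above: the cancel arrived at an instance that does not own the stream, so it has to be forwarded rather than silently dropped.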

Serverless timeout ceilings

Vercel serverless functions have hard limits: 10 seconds on Hobby, 15 seconds on Pro by default, and 300 seconds at maximum. Edge Functions must begin streaming within 25 seconds. For long-running agents, these are not edge cases: they are the expected runtime.

How Vercel architected the fix point: ChatTransport

In AI SDK 5 (July 2025), Vercel made a deliberate architectural choice: ship a pluggable transport interface rather than bundle one. The ChatTransport interface decouples useChat from its transport implementation, so you can swap in your own delivery mechanism without touching application code:

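import { useChat } from '@ai-sdk/react';

// MyCustomTransport is a placeholder for your own ChatTransport implementation
const { messages } = useChat({
  transport: new MyCustomTransport(),
});

Only the delivery mechanism changes. Agent code and UI rendering stay as they are.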
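For a sense of what sits behind the `MyCustomTransport` placeholder, the sketch below outlines the two jobs a transport has: deliver a response stream for a new message, and reattach to an existing stream. It does not import the SDK. `TransportSketch`, `Chunk`, and the option shapes are simplified stand-ins rather than the SDK's real types, so check the ChatTransport docs for the actual signatures before building on it.

```typescript
// Simplified stand-ins for the SDK's types, so the sketch runs without the `ai` package.
type Chunk = { type: "text-delta"; delta: string } | { type: "finish" };

interface TransportSketch {
  sendMessages(options: { chatId: string; text: string }): Promise<ReadableStream<Chunk>>;
  reconnectToStream(options: { chatId: string }): Promise<ReadableStream<Chunk> | null>;
}

// Wrap an array of chunks in a stream that emits them and then closes.
function toStream(chunks: Chunk[]): ReadableStream<Chunk> {
  return new ReadableStream<Chunk>({
    start(controller) {
      for (const chunk of chunks) controller.enqueue(chunk);
      controller.close();
    },
  });
}

// Hypothetical transport that remembers what each chat produced so it can be replayed.
class MyCustomTransport implements TransportSketch {
  private history = new Map<string, Chunk[]>();

  async sendMessages({ chatId, text }: { chatId: string; text: string }) {
    // Stand-in for the agent: echo the prompt back word by word.
    const chunks: Chunk[] = [
      ...text.split(" ").map((word): Chunk => ({ type: "text-delta", delta: word + " " })),
      { type: "finish" },
    ];
    this.history.set(chatId, chunks);
    return toStream(chunks);
  }

  async reconnectToStream({ chatId }: { chatId: string }) {
    const chunks = this.history.get(chatId);
    return chunks ? toStream(chunks) : null; // null means there is nothing to resume
  }
}
```

A production transport would replace the in-memory map and the echo with a real channel to the agent. The point of the sketch is only that reconnection becomes a first-class operation of the transport rather than something the UI has to improvise.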

The reasoning is structural. Vercel’s serverless platform cannot host persistent WebSocket connections. Their own infrastructure docs confirm this.

Rather than ship a transport tied to their platform, they made transport pluggable and pointed you toward external providers. Their knowledge base lists recommended WebSocket providers for AI applications.

ChatTransport points at something wider than Vercel’s platform constraints. The transport problem isn’t specific to any one framework. It’s a gap that appears across every production AI stack, regardless of how the agent side is built.

The gap in the stack

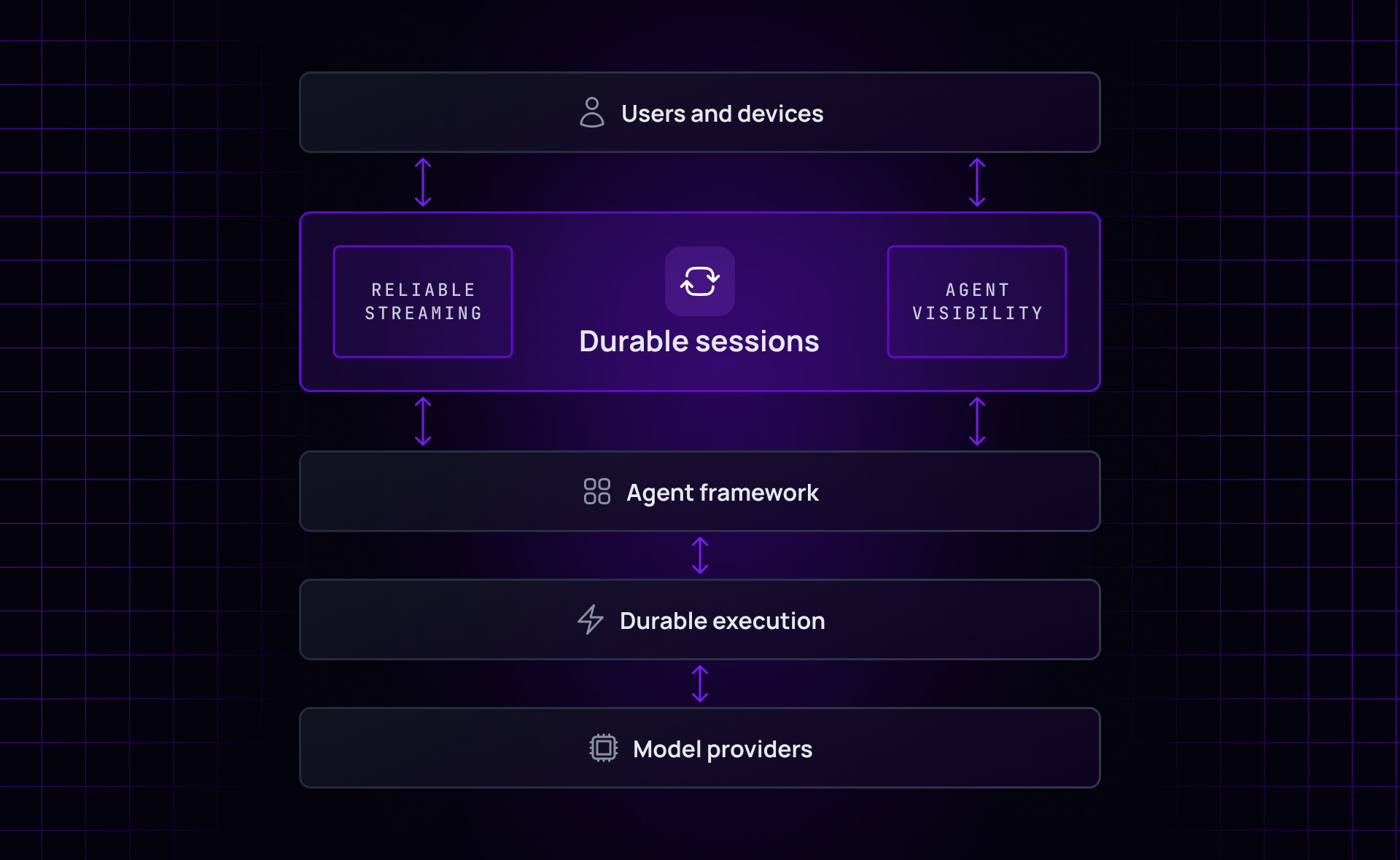

Map the full AI application stack and one layer is consistently missing. No framework provides a stateful session between the agent and the user that survives the conditions production actually imposes.

Agent frameworks, UI primitives, databases, and execution engines each cover their own layer. What none of them handle is the combination of stream resumability, multi-device delivery, bidirectional signaling, and agent health detection - in a single layer. Not without you building that glue yourself.

This is what “durable sessions” describes: a session layer that outlives any single connection, device, or participant.

This gap isn’t a Vercel problem to solve. ChatTransport is Vercel’s way of making it someone else’s job, deliberately and correctly.

Your options for filling the gap

Teams keep ending up in the same four places.

1. Redis buffer

The most common first step: a Redis instance between the AI backend and the client. The agent publishes to Redis; a server process forwards to the client. This decouples generation from delivery and solves the simplest reconnection case.

It works, and plenty of teams ship it. But Redis Pub/Sub provides no delivery guarantees. A message published while a subscriber is disconnected is lost. There is no built-in history for catch-up on reconnect.

Multi-device fan-out, protocol fallback, and presence all require custom work on top. Vercel’s own resumable-stream uses this pattern and explicitly documents what it doesn’t cover. Right for simple cases; expensive to extend.

2. Build your own WebSocket layer

Many teams go here next: a persistent connection on a dedicated server or container, outside Vercel's serverless functions. This solves the one-way SSE constraint and gives you a bidirectional channel.

The cost is ownership. You’re now responsible for connection management, reconnection logic, message ordering, and scaling the WebSocket server independently of the rest of your infrastructure. Most teams that go this route end up having rebuilt most of a realtime platform. The irony tends to become obvious only after the fact.

3. Database with realtime subscriptions

Supabase, Firebase, Convex, and Neon all offer realtime query subscriptions. You can route AI streaming through them: write tokens to a database row, subscribe to changes from the client. It uses infrastructure you may already have and works at small scale.

The constraint is volume. Token streaming creates approximately 180,000 writes per hour per session. That hits single-threaded CDC bottlenecks quickly and adds write latency to every token.

A reasonable starting point for prototypes; not a long-term architecture at scale.
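The write-volume figure above is easy to reproduce. The sketch below assumes one row write per token and a sustained rate of 50 tokens per second, which is the rate implied by 180,000 writes per hour (180,000 / 3,600) rather than a number stated in the source. The batching helper is an illustrative mitigation, not something the source prescribes.

```typescript
// Row writes per hour when every `tokensPerWrite` tokens cost one database write.
function writesPerHour(tokensPerSecond: number, tokensPerWrite = 1): number {
  return Math.ceil((tokensPerSecond * 3600) / tokensPerWrite);
}

// Group tokens so that each batch costs one write instead of one write per token.
function batchTokens(tokens: string[], tokensPerWrite: number): string[] {
  const batches: string[] = [];
  for (let i = 0; i < tokens.length; i += tokensPerWrite) {
    batches.push(tokens.slice(i, i + tokensPerWrite).join(""));
  }
  return batches;
}

console.log(writesPerHour(50)); // one write per token
console.log(writesPerHour(50, 10)); // ten tokens per write
```

Batching cuts write volume in proportion to the batch size, but every token in a batch waits for the batch to fill, so the UI updates in visible steps instead of token by token.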

4. Purpose-built transport layer

A purpose-built transport layer provides the channel infrastructure the custom WebSocket build requires, without the ownership cost. The capabilities that matter:

Reconnects replay from the last offset - no lost tokens, no duplicate messages

One agent publish reaches every subscriber. Connected users get live streaming; tabs that reconnected get caught up automatically

Protocol is negotiated on the fly (WebSocket → HTTP streaming → long-poll), so it works on whatever network the enterprise allows

Cancel and steer signals travel back on the same channel in milliseconds, as an explicit message - not a TCP drop the server has to guess at

Agents register presence on the channel, so a crash shows up immediately as a disconnect event, not as a dead stream you have to poll to detect

The ChatTransport interface is the integration point for this approach. The relevant comparison points are delivery guarantees, offset-based history for catch-up, and protocol fallback, not raw WebSocket support alone.
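Protocol fallback, in its simplest form, is an ordered list of candidates tried until one connects. The sketch below is a sequential version under that assumption; `Candidate` and `negotiate` are hypothetical names, and real transport libraries typically do more (parallel probes, upgrading mid-session).

```typescript
// A candidate protocol. `connect` is hypothetical: it resolves on success and
// rejects when the network blocks that protocol.
interface Candidate {
  name: string;
  connect: () => Promise<void>;
}

// Try each candidate in preference order and return the name of the first that connects.
async function negotiate(candidates: Candidate[]): Promise<string> {
  const failures: string[] = [];
  for (const candidate of candidates) {
    try {
      await candidate.connect();
      return candidate.name;
    } catch (err) {
      // Remember why this protocol failed, then fall through to the next one.
      failures.push(`${candidate.name}: ${String(err)}`);
    }
  }
  throw new Error(`no transport available (${failures.join("; ")})`);
}
```

Called with candidates ordered WebSocket, HTTP streaming, long-poll, a network that blocks WebSocket upgrades ends up on HTTP streaming without the application noticing.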

Which path is right for your situation

| Scenario | SSE sufficient? | What you need |
| --- | --- | --- |
| Simple chatbot, single device, stable network | Yes | Default useChat: don’t add complexity you don’t need |
| “Streaming works locally but not deployed” | No | Protocol fallback: WebSocket → HTTP → long-poll, auto-negotiated |
| Session continuity across tabs or devices | No | Channel-based fan-out with offset history; SSE can’t provide it |
| Long-running agents (30s+) | No | Stateful transport with agent presence signals |
| Human oversight of AI interactions | Partial | needsApproval handles user-side; org-side escalation requires a session layer |
| Enterprise deployment | No | Proxy traversal, SOC 2, audit trail; Redis builds don’t scale here |

The AI UX challenge: where Ably fits

Getting AI products to work reliably for real users is the unsolved part. Experiences that hold up on enterprise networks, on mobile, across devices, with agents that run for minutes.

Vercel AI SDK handles orchestration, model abstraction, and UI. What it explicitly doesn’t handle is the session layer between the agent and the user. That layer needs to survive dropped connections, follow users across devices, carry bidirectional signals, and surface agent health without polling.

Ably AI Transport fills that gap. It sits between the Vercel AI SDK application layer and the user, giving agents and clients the durable session layer that SSE can’t provide. Because Vercel built ChatTransport as a plug-in point, adding it is a transport swap.

The useChat hooks, agent code, and UI rendering stay exactly as they are.

Ably AI Transport integrates with the Vercel AI SDK to add durable sessions, multi-device sync, and bidirectional control to your chat application. Visit the Ably AI Transport overview, read the documentation, or sign up free.

Ready to build? Get started with Vercel AI SDK.

Research basis: analysis of 300+ GitHub issues in vercel/ai repository; 31 Vercel Community Forum threads (65% unresolved); 35 Stack Overflow questions (40% unanswered); Hacker News, Reddit, and developer blog analysis, March 2026. GitHub issues cited: #6502, #8390. External sources: Vercel AI SDK 6 announcement; Vercel Functions Limits; Vercel KB: WebSocket support; ChatTransport docs.
